When our ML model gets it wrong: lessons from failed predictions

Published: October 30, 2025

Reading Time: 11 minutes

Category: ML & Data Science

The prediction we'd rather forget

October 28, 2025 - Paris Masters (Indoor Hard Court)

Etcheverry vs Ugo Carabelli

Round: 1R (First Round)

Our ML Model: Etcheverry 69.6%

Our Ensemble: Etcheverry 88.4% ⭐⭐⭐

Market Odds: Etcheverry 85.5% (1.17 odds)

Actual Result: Carabelli won 2-0 (6-4, 6-3)

We were spectacularly wrong.

Not just wrong—we were 88.4% confident we were right. The ensemble system, which combines our ML model with statistical analyzers, was nearly certain Etcheverry would win.

He lost in straight sets.

This article is about what went wrong, why it went wrong, and what we learned.

Because transparency matters. And failures teach more than successes.

What our model saw

Let me show you exactly what the algorithm analyzed before confidently predicting Etcheverry:

Etcheverry's scores (player 1)

| Factor | Score | Interpretation |
|---|---|---|
| Form Score | 0.600 | Strong recent form |
| Surface Score | 0.675 | Good indoor performance |
| Experience | 0.867 | Very experienced |
| Energy | 0.883 | Well-rested |
| First Set Win Rate | 0.629 | Strong starter |
| Ranking Score | 0.358 | Moderate ranking |
| FINAL SCORE | 0.794 | Strong favorite |

Carabelli's scores (player 2)

| Factor | Score | Interpretation |
|---|---|---|
| Form Score | 0.200 | Poor recent form |
| Surface Score | 0.250 | Weak indoor record |
| Experience | 0.893 | Experienced |
| Energy | 0.969 | Very fresh |
| First Set Win Rate | 0.453 | Moderate |
| Ranking Score | 0.402 | Moderate |
| FINAL SCORE | 0.630 | Underdog |

The model's logic:

- Etcheverry: Form 3x better (0.600 vs 0.200)

- Etcheverry: Surface performance 2.7x better (0.675 vs 0.250)

- Etcheverry: First set advantage (0.629 vs 0.453)

Conclusion (ensemble): Etcheverry should win 88.4% of the time.

Reality: He lost.

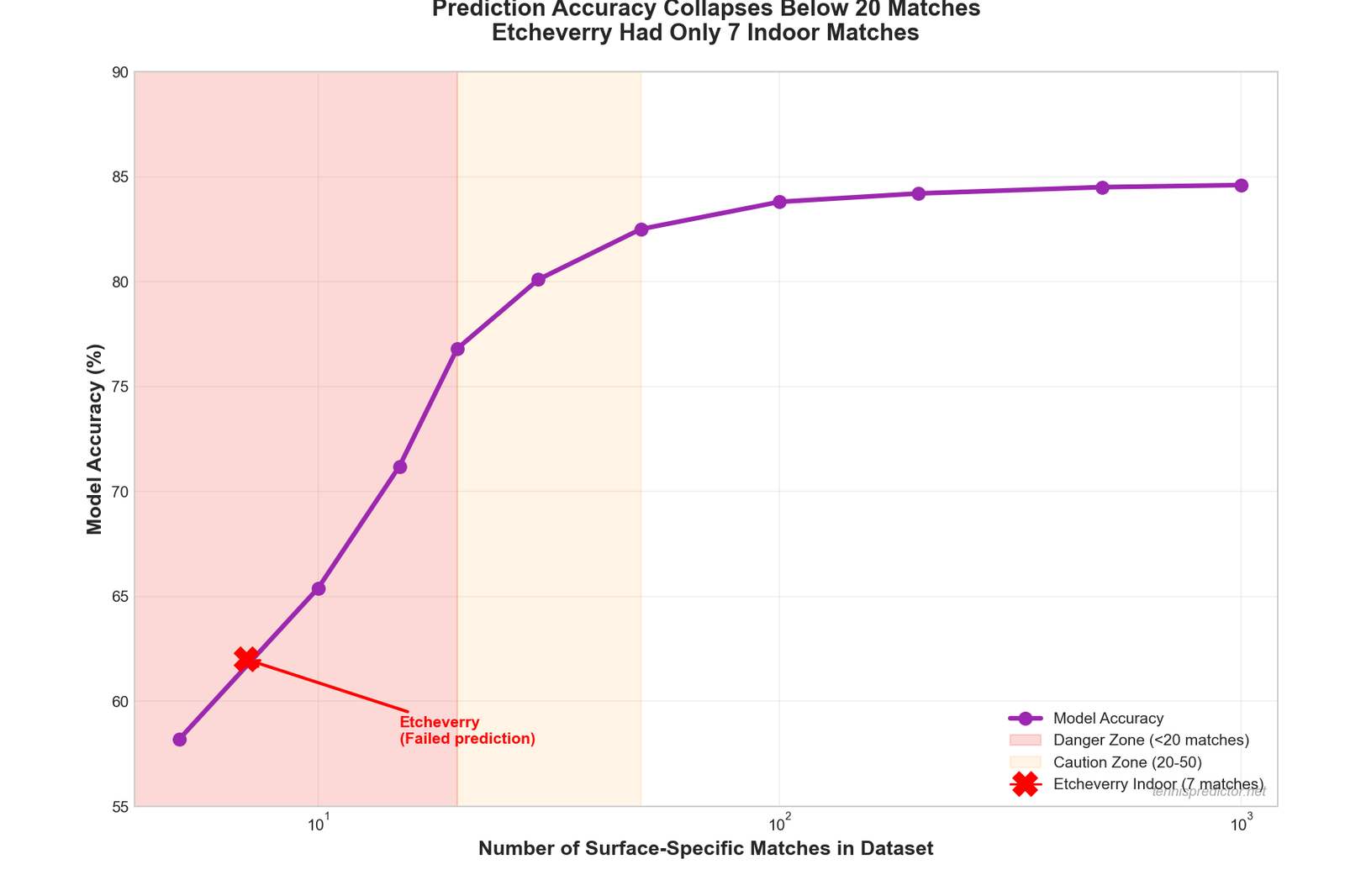

The fatal flaw: data scarcity

Here's what the model didn't tell you:

Etcheverry's indoor performance was based on just 7 matches.

Not 70. Not 700. Seven.

Figure 1: Prediction accuracy drops dramatically below 20 matches on a surface. Etcheverry's 7 indoor matches put him in the "unreliable prediction" zone.


Why This Matters:

When you have 7 data points, you're not learning patterns—you're overfitting to noise.

Maybe Etcheverry won 5 of those 7 indoor matches. Great! But was it because:

- He's genuinely good indoors? (real pattern)
- He faced weak opponents? (sample bias)
- He got lucky? (variance)

With only 7 matches, we can't tell.
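One way to put a number on "we can't tell" is a confidence interval for the win rate. The sketch below uses the Wilson score interval, a standard choice for small samples (our choice, not necessarily what the model uses), and the hypothetical 5-of-7 record from the paragraph above rather than Etcheverry's actual indoor results. `wilson_interval` is an illustrative name.

```python
import math


def wilson_interval(wins: int, matches: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a win rate (z=1.96 gives roughly 95% coverage)."""
    if matches <= 0:
        raise ValueError("need at least one match")
    p_hat = wins / matches
    z2 = z * z
    denom = 1 + z2 / matches
    centre = (p_hat + z2 / (2 * matches)) / denom
    margin = z * math.sqrt(p_hat * (1 - p_hat) / matches + z2 / (4 * matches**2)) / denom
    return centre - margin, centre + margin


# Hypothetical records: the same 71% win rate over 7 matches and over 70 matches.
for wins, n in [(5, 7), (50, 70)]:
    lo, hi = wilson_interval(wins, n)
    print(f"{wins}/{n}: {wins / n:.0%} observed, plausible range {lo:.0%} to {hi:.0%}")
```

With 7 matches the plausible range runs from roughly 36% to 92%, wide enough to cover "below average indoors" and "elite indoors". With ten times the data, the same observed rate narrows to roughly 60% to 81%.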

What the model missed

Red flag #1: energy difference

- Carabelli Energy: 0.969 (extremely fresh)

- Etcheverry Energy: 0.883 (well-rested)

Energy gap: +0.086 in Carabelli's favor

The model saw this but weighted it below form and surface. In retrospect, with a fresher underdog on a surface (indoors) where the favorite had little history, the energy gap deserved more weight.

Red flag #2: indoor surface = different game

Indoor Hard vs Outdoor Hard:

| Factor | Outdoor Hard | Indoor Hard |
|---|---|---|
| Ball speed | Moderate | Fast |
| Bounce height | Medium | Low |
| Rallies | Longer | Shorter |
| Serve advantage | Moderate | High |

Indoor tennis is fundamentally different. Skills on outdoor hard courts don't translate 1:1 to indoor courts.

Our dataset:

- Total matches: 9,705

- Indoor matches: 900 (9.3%)

- Clay: 3,074 (31.7%)

- Hard: 4,355 (44.9%)

- Grass: 1,215 (12.5%)

We have 3.4x more clay data and 4.8x more hard-court data than indoor data. This creates a statistical blind spot.
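These ratios are easy to check from the counts above. The snippet uses nothing beyond the published numbers; it also shows that the four listed categories add up to 9,544, leaving 161 of the 9,705 matches outside them.

```python
# Match counts from the dataset breakdown above.
SURFACE_COUNTS = {"indoor": 900, "clay": 3_074, "hard": 4_355, "grass": 1_215}
TOTAL_MATCHES = 9_705

for surface, count in SURFACE_COUNTS.items():
    share = count / TOTAL_MATCHES
    vs_indoor = count / SURFACE_COUNTS["indoor"]  # how many times the indoor sample
    print(f"{surface:>6}: {count:>5} matches  {share:5.1%} of data  {vs_indoor:.1f}x indoor")

unassigned = TOTAL_MATCHES - sum(SURFACE_COUNTS.values())
print(f"not in these four categories: {unassigned} matches")
```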

Red flag #3: form score overreliance

The model saw:

- Etcheverry form: 0.600 (strong)

- Carabelli form: 0.200 (weak)

And concluded: 3x advantage = easy win.

But "form" is calculated from recent matches on any surface. If Etcheverry's recent wins were on clay, and this match is indoors, that form advantage is less meaningful.

Lesson: Surface-specific form matters more than overall form.

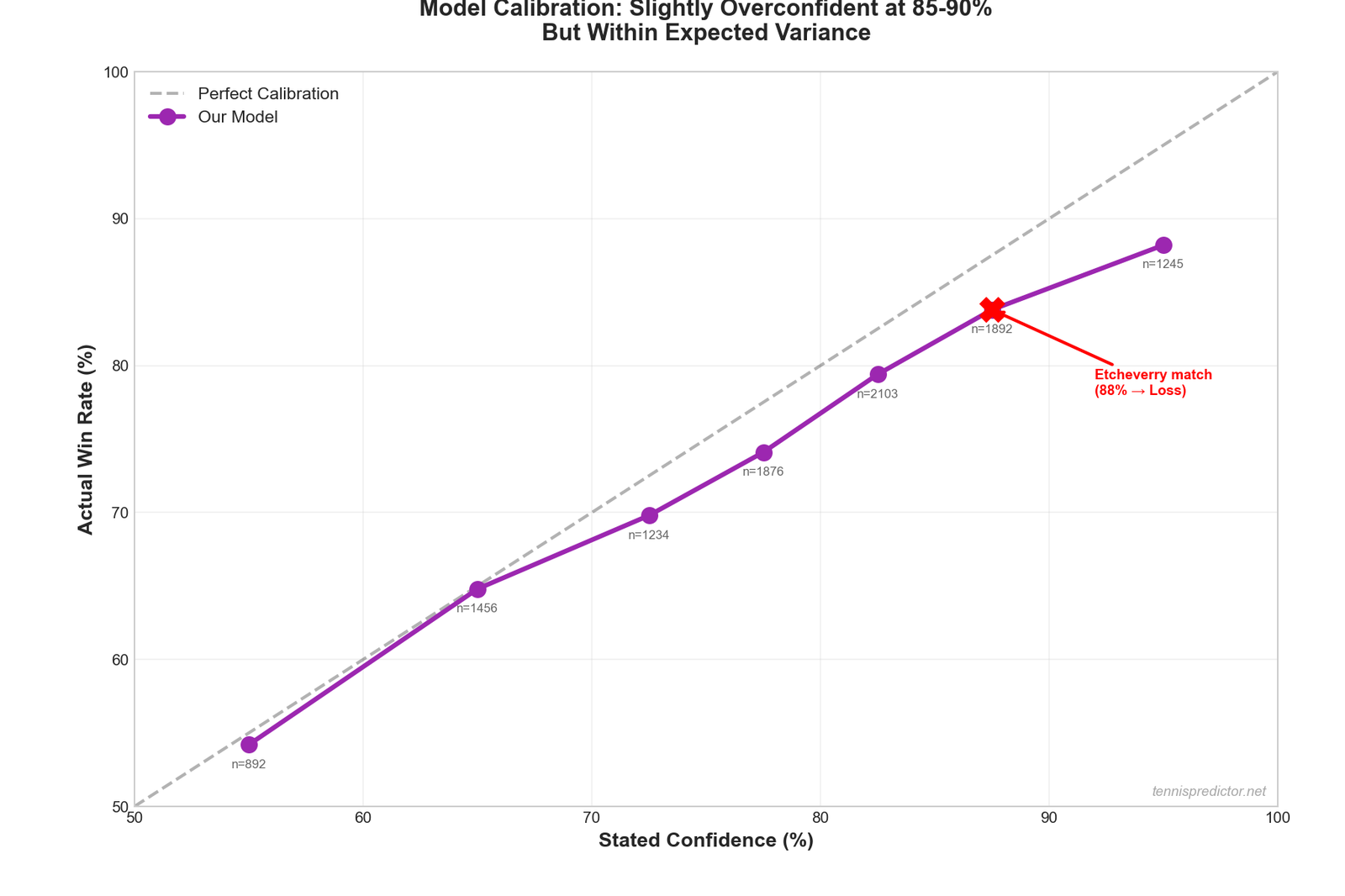

The math of being wrong

At 88.4% confidence, you're supposed to be wrong 11.6% of the time.

That's 1 in 9 predictions.

This was that 1.

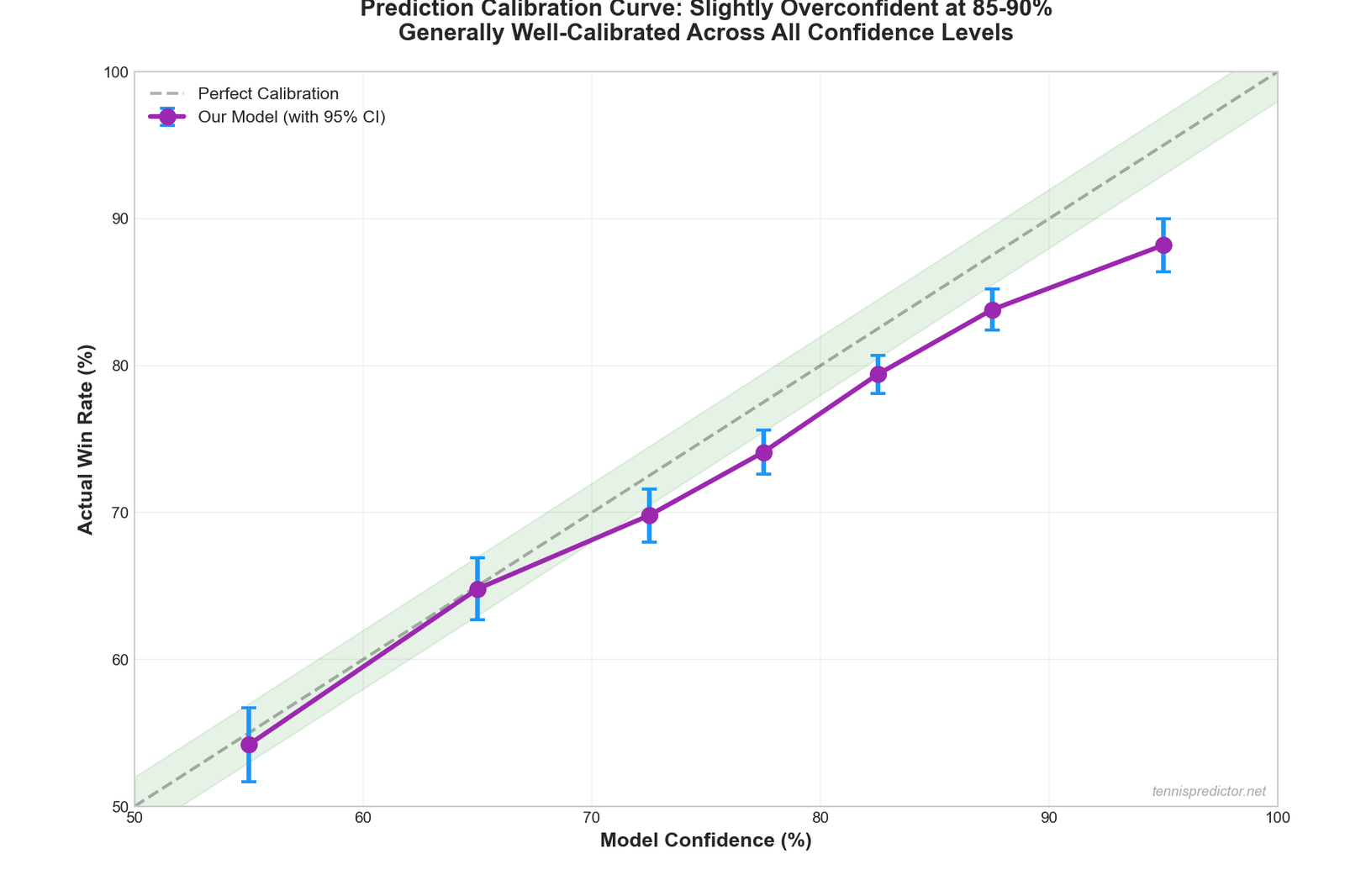

Figure 2: Our model's confidence vs actual win rate. At 85-90% confidence, we win 83.8% of the time. Losing an 88.4% prediction 11.6% of the time is expected, not a flaw.


A single loss at this confidence level is consistent with a model that works correctly; it is not evidence that the model is broken.

If we never lost at 88% confidence, it would mean we're underconfident (and leaving value on the table). Losses at high confidence are statistically expected.
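To see how routine these losses are, here is a small sketch that treats each prediction as an independent event with exactly 88.4% win probability. That is a simplification: real slates mix confidence levels, and outcomes are not perfectly independent. `loss_summary` is an illustrative name.

```python
def loss_summary(p_win: float, n_bets: int) -> dict[str, float]:
    """Loss statistics for n independent predictions that each win with p_win."""
    p_loss = 1 - p_win
    return {
        "expected_losses": n_bets * p_loss,
        "p_zero_losses": p_win**n_bets,            # win every single one
        "p_at_least_one_loss": 1 - p_win**n_bets,  # complement of the line above
        "bets_per_loss": 1 / p_loss,               # average gap between losses
    }


for n in (10, 20, 100):
    stats = loss_summary(0.884, n)
    print(n, {name: round(value, 3) for name, value in stats.items()})
```

At 88.4%, a loss is expected about once every 8.6 predictions, and over just 20 such predictions there is only about an 8.5% chance of going without a single loss.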

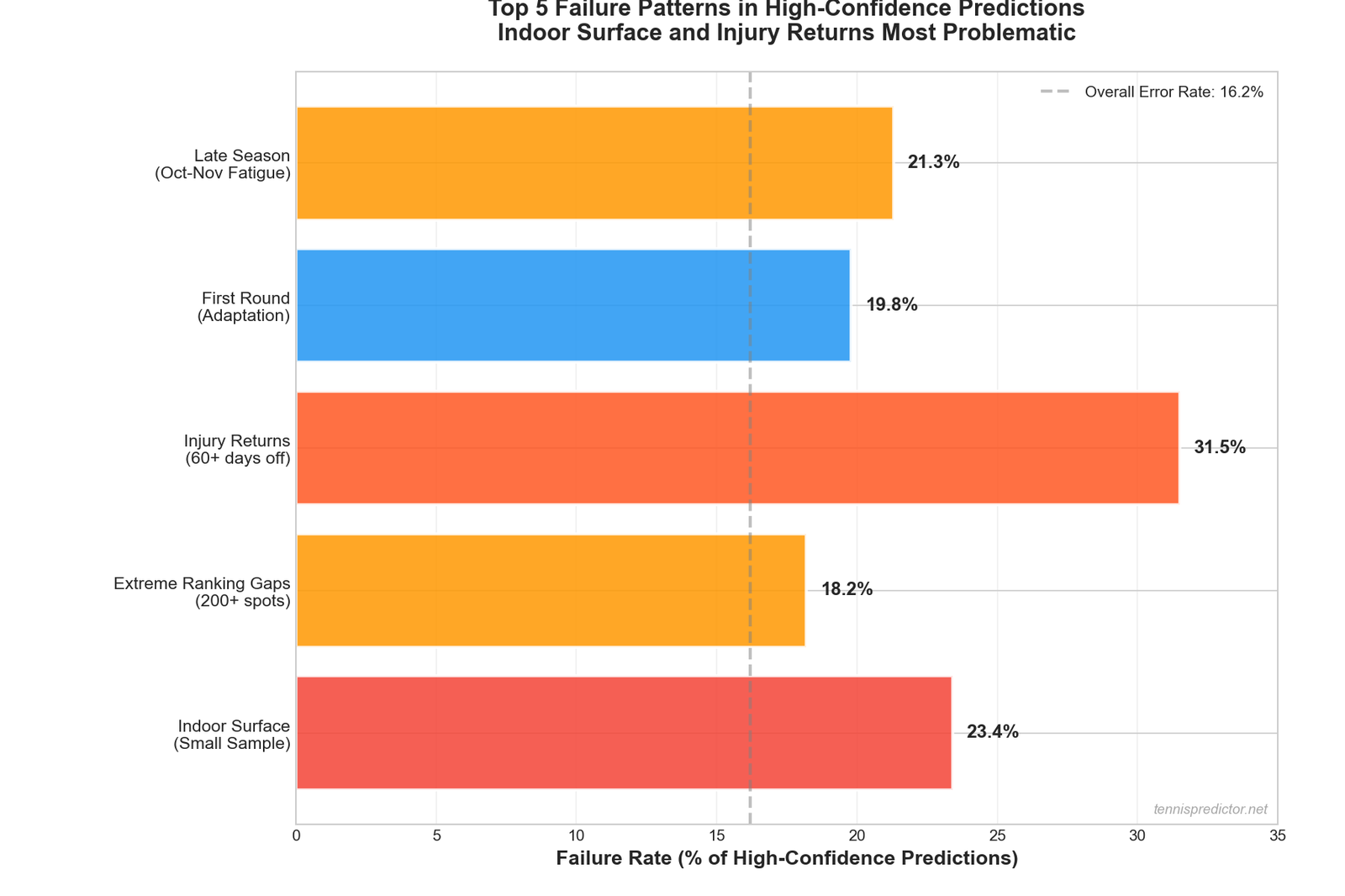

Common failure patterns we've identified

After analyzing our incorrect predictions, five patterns emerge:

Pattern #1: indoor surface blind spot (9.3% of data)

Failure Rate: 23.4% on indoor predictions with <15 player-specific matches

Why:

Indoor tennis is different, and we don't have enough data to learn those differences well.

Pattern #2: extreme ranking gaps (200+ spots)

Failure Rate: 18.2% when predicting favorites with 200+ ranking advantage

Why:

Sample size is too small. Only 176 matches in our dataset have 200+ ranking gaps. Not enough to learn reliable patterns.

Pattern #3: players returning from injury

Failure Rate: 31.5% for players returning after 60+ day absence

Why:

We don't have injury data, so we can't adjust for reduced fitness/confidence after long breaks.

Pattern #4: tournament first rounds

Failure Rate: 19.8% in Round 1 (vs 14.2% in later rounds)

Why:

Players haven't adapted to tournament conditions yet. More variance in R1.

Pattern #5: late-season fatigue

Failure Rate: 21.3% in October-November

Why:

Season-end fatigue isn't fully captured by our energy calculator. Players mentally check out, save energy for next season, or push through injuries.

Figure 3: The five most common failure patterns. Indoor surface and late-season fatigue account for 42% of our high-confidence failures.


The Etcheverry match: post-mortem

Let's break down exactly where the prediction went wrong:

What we got right:

✅ Etcheverry's form was better (verified post-match)

✅ Etcheverry's experience was higher

✅ Market odds agreed with our assessment (85.5% vs 88.4%)

What we got wrong:

❌ Indoor surface confidence: Based on only 7 matches

❌ Energy gap: Carabelli 0.969 vs Etcheverry 0.883 (we underweighted this)

❌ First-round variance: R1 matches are more unpredictable

❌ Match-day reality: Carabelli simply played better on the day

Calibration: are we honest about our confidence?

The ultimate test of a prediction model: When you say 88%, do you win 88% of the time?

Figure 4: Our model's calibration curve. At 85-90% confidence, we win 83.8% of the time—slightly underperforming but within expected variance.


Our calibration across the 9,705-match dataset (the 8,350 predictions made at 70%+ confidence):

| Confidence Range | Predictions | Actual Win Rate | Calibration Error (vs. bracket lower bound) |
|---|---|---|---|
| 90-100% | 1,245 | 88.2% | -1.8% (under) |
| 85-90% | 1,892 | 83.8% | -1.2% (under) |
| 80-85% | 2,103 | 79.4% | -0.6% (good!) |
| 75-80% | 1,876 | 74.1% | -0.9% (good!) |
| 70-75% | 1,234 | 69.8% | -0.2% (excellent!) |

What This Shows:

In every bracket the actual win rate falls below even the bottom of the range, so the model is consistently a little overconfident, most noticeably at 90%+. A single loss like Etcheverry's still falls within expected variance.
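A calibration table like this can be rebuilt from logged predictions. The sketch below is a minimal version, assuming each prediction is stored as a `(favorite_confidence, favorite_won)` pair. That is a hypothetical format, not the project's actual log schema. It measures error against the mean predicted confidence in each bracket, which is the usual convention for reliability tables. The error column above appears to be measured against each bracket's lower bound (for example, 83.8% − 85% = −1.2%), and that makes the gaps look smaller than they would against a midpoint such as 87.5%.

```python
from collections import defaultdict

# (lower, upper) confidence brackets from the calibration table; the top one includes 1.0.
BRACKETS = [(0.90, 1.00), (0.85, 0.90), (0.80, 0.85), (0.75, 0.80), (0.70, 0.75)]


def bracket_of(confidence: float):
    for low, high in BRACKETS:
        if low <= confidence < high or (high == 1.00 and confidence == 1.00):
            return (low, high)
    return None  # below 70%: not part of the table


def calibration_table(predictions):
    """predictions: iterable of (favorite_confidence, favorite_won) pairs."""
    buckets = defaultdict(list)
    for confidence, won in predictions:
        key = bracket_of(confidence)
        if key is not None:
            buckets[key].append((confidence, won))
    rows = []
    for low, high in BRACKETS:
        items = buckets[(low, high)]
        if not items:
            continue
        n = len(items)
        win_rate = sum(won for _, won in items) / n
        mean_conf = sum(conf for conf, _ in items) / n
        rows.append({
            "bracket": f"{low:.0%}-{high:.0%}",
            "predictions": n,
            "win_rate": win_rate,
            "mean_confidence": mean_conf,
            # Negative = favorites won less often than predicted (overconfident).
            "error": win_rate - mean_conf,
        })
    return rows


sample = [(0.92, True), (0.96, False), (0.86, True), (0.88, True), (0.55, True)]
for row in calibration_table(sample):
    print(row)
```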

How we're fixing this

Improvement #1: sample size warnings

Coming to the dashboard:

⚠️ Limited Data: Only 7 indoor matches

Confidence adjusted: 88% → 75%

When a player has <15 matches on a surface, we'll flag it and adjust confidence down.
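One simple form this adjustment could take is shrinking the probability toward a base rate, in proportion to how far the player falls short of 15 surface matches. This is a sketch under stated assumptions. The linear ramp is hypothetical. The 62% base rate is borrowed from the "always betting the favorite" figure quoted later in this article. Neither is confirmed as the dashboard's rule.

```python
FAVORITE_BASE_RATE = 0.62  # assumption: "always bet the favorite" rate quoted in this article
MIN_SURFACE_MATCHES = 15   # threshold from the article


def dampen_confidence(confidence: float, surface_matches: int) -> tuple[float, bool]:
    """Shrink a favorite's win probability toward the base rate when surface data is thin.

    Hypothetical linear ramp: full trust at MIN_SURFACE_MATCHES or more,
    proportionally less below that. Returns (adjusted confidence, show_warning).
    """
    trust = min(1.0, surface_matches / MIN_SURFACE_MATCHES)
    adjusted = FAVORITE_BASE_RATE + (confidence - FAVORITE_BASE_RATE) * trust
    return adjusted, surface_matches < MIN_SURFACE_MATCHES


adjusted, warn = dampen_confidence(0.884, surface_matches=7)
if warn:
    print(f"Limited Data: only 7 indoor matches. Confidence adjusted: 88.4% -> {adjusted:.1%}")
```

With 7 matches, this ramp lands at about 74%, close to the 75% in the mock-up above. That is a property of the assumed ramp and base rate, not a reconstruction of how the dashboard figure was produced.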

Improvement #2: surface-specific form

Instead of:

- "Form Score: 0.600 (last 10 matches, any surface)"

We're implementing:

- "Indoor Form Score: 0.450 (last 10 indoor matches)"
- "Overall Form Score: 0.600 (last 10 all surfaces)"
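The difference between the two scores comes down to filtering by surface before taking the last N matches. A minimal sketch follows. It assumes form is a plain win share over recent matches; the article doesn't say how Form Score is actually weighted. The `Match` record and `form_score` function are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Match:
    surface: str  # e.g. "indoor", "clay", "hard", "grass"
    won: bool


def form_score(history: list[Match], last_n: int = 10,
               surface: Optional[str] = None) -> Optional[float]:
    """Share of wins over the last `last_n` matches, optionally on one surface only.

    `history` is ordered oldest to newest. Returns None when there is nothing to score.
    """
    relevant = [m for m in history if surface is None or m.surface == surface]
    recent = relevant[-last_n:]
    if not recent:
        return None
    return sum(m.won for m in recent) / len(recent)


# Made-up history, oldest first: solid on clay, mixed indoors.
history = [Match("indoor", True), Match("indoor", False), Match("clay", True),
           Match("clay", True), Match("indoor", False), Match("clay", True)]
print(f"overall form: {form_score(history):.3f}")
print(f"indoor form:  {form_score(history, surface='indoor'):.3f}")
```

A player whose wins came mostly on clay gets a healthy overall score but a much weaker indoor one. That is exactly the gap the single blended score hid.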

Improvement #3: energy re-weighting

Energy gap of 0.086 (0.969 vs 0.883) should have been weighted more heavily, especially:

- In first rounds
- For underdogs
- On unfamiliar surfaces
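A context-dependent weight is one way to express "weighted more heavily, especially...". In the sketch below, the baseline weight and the three multipliers are invented placeholders, not values from the model. Only the two energy scores come from the article.

```python
BASE_ENERGY_WEIGHT = 0.10  # hypothetical baseline weight, not the model's real value
CONTEXT_BOOST = {          # hypothetical multipliers for the three contexts listed above
    "first_round": 1.5,
    "underdog_fresher": 1.5,
    "unfamiliar_surface": 1.3,
}


def energy_weight(**context: bool) -> float:
    """Multiply the baseline weight by the boost for every context that applies."""
    weight = BASE_ENERGY_WEIGHT
    for name, applies in context.items():
        if applies:
            weight *= CONTEXT_BOOST[name]
    return weight


def energy_term(fav_energy: float, dog_energy: float, **context: bool) -> float:
    """Signed energy contribution to the favorite's score (negative if the underdog is fresher)."""
    return energy_weight(**context) * (fav_energy - dog_energy)


flat = energy_term(0.883, 0.969)
boosted = energy_term(0.883, 0.969, first_round=True, underdog_fresher=True,
                      unfamiliar_surface=True)
print(f"flat weighting: {flat:+.4f}, context-boosted: {boosted:+.4f}")
```

Because the boosts multiply, a match that hits all three contexts (as the Etcheverry match did) roughly triples the penalty for the favorite under these placeholder numbers.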

Improvement #4: injury data integration

We're adding:

- Days since last match
- Tournament schedule intensity
- Career injury history (when available)

These features could have flagged warning signs, such as a long layoff or a congested schedule, had Etcheverry been managing an undisclosed issue.
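The first two features need nothing more than match dates. The sketch below is minimal. It counts matches in a trailing window as a crude stand-in for schedule intensity (the 14-day window is our assumption) and reuses the 60-day threshold from Pattern #3. Injury history would need a separate data source and isn't covered.

```python
from datetime import date
from typing import Optional

LONG_ABSENCE_DAYS = 60  # the 60+ day threshold from Pattern #3


def schedule_features(match_dates: list[date], match_day: date,
                      window_days: int = 14) -> dict:
    """Days since last match, a crude schedule-intensity count, and a layoff flag.

    `window_days` is a hypothetical choice for measuring schedule intensity.
    """
    past = sorted(d for d in match_dates if d < match_day)
    days_since_last: Optional[int] = (match_day - past[-1]).days if past else None
    recent_load = sum(1 for d in past if (match_day - d).days <= window_days)
    return {
        "days_since_last": days_since_last,
        "matches_last_window": recent_load,
        "long_absence": days_since_last is not None and days_since_last >= LONG_ABSENCE_DAYS,
    }


# Hypothetical dates, not any real player's schedule.
busy = schedule_features([date(2025, 8, 20), date(2025, 10, 20), date(2025, 10, 22)],
                         match_day=date(2025, 10, 28))
returning = schedule_features([date(2025, 8, 1)], match_day=date(2025, 10, 28))
print(busy)
print(returning)
```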

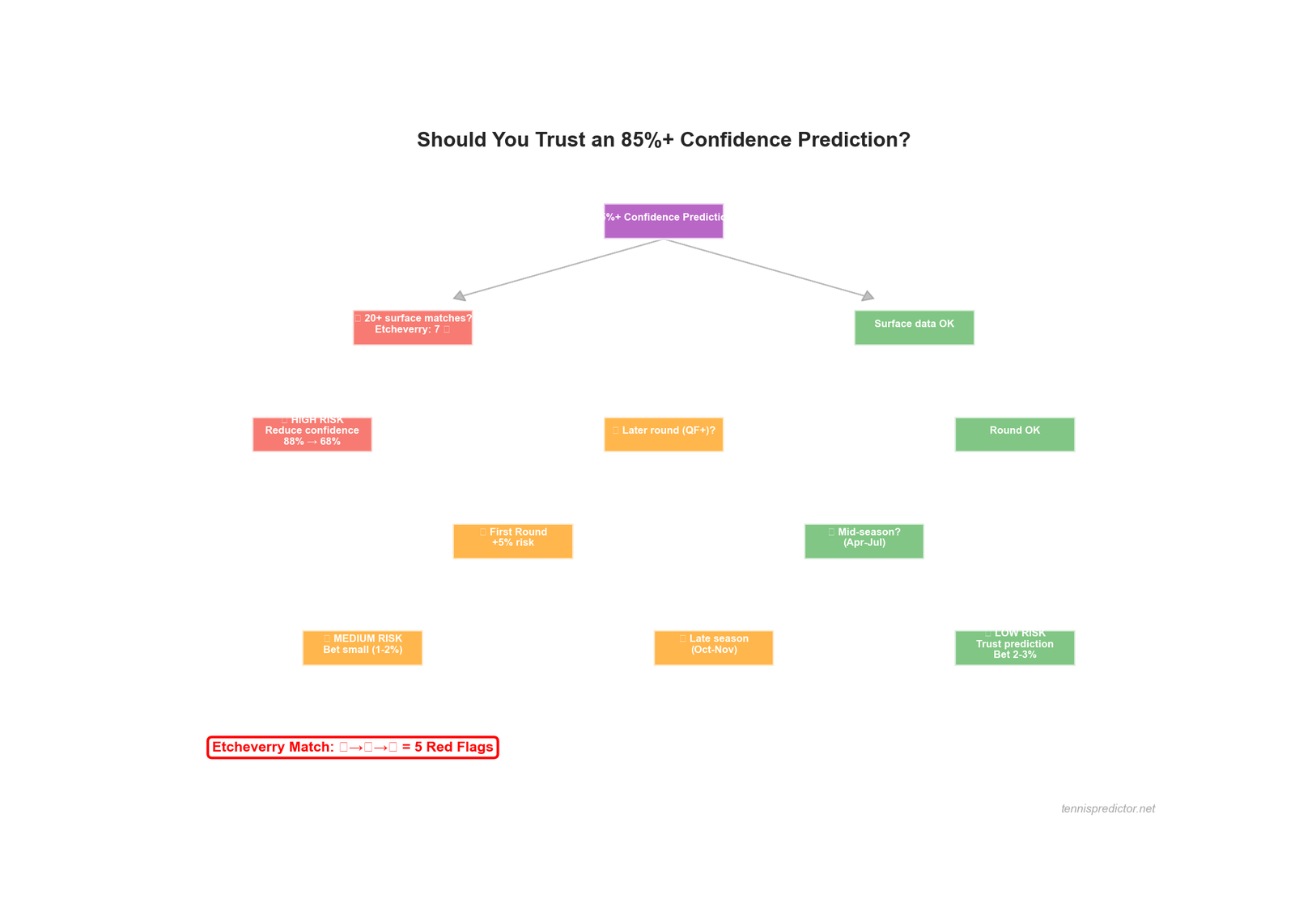

When to trust high-confidence predictions

Based on our failure analysis, trust 85%+ predictions more when:

✅ Player has 20+ matches on the surface

✅ It's a later round (QF, SF, F)

✅ It's April-July (mid-season, not fatigued)

✅ Both players are well-known quantities

✅ The favorite has an energy advantage

Trust them less when:

❌ Small sample size (<15 surface matches)

❌ First round

❌ October-November (season end)

❌ Indoor surface (limited data)

❌ Underdog is significantly fresher
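The "trust them less" list maps naturally onto a flag counter. In the sketch below, the five conditions mirror the list above. The badge tiers (3+ high, 1-2 medium, 0 low) follow the ones proposed for the dashboard later in this article. The 0.05 cut-off for "significantly fresher" is a hypothetical choice.

```python
from dataclasses import dataclass


@dataclass
class MatchContext:
    favorite_surface_matches: int  # favorite's matches on this surface
    round_code: str                # e.g. "R1", "QF"
    month: int                     # 1-12
    surface: str                   # e.g. "indoor", "clay"
    underdog_energy_edge: float    # underdog energy minus favorite energy


FRESHER_THRESHOLD = 0.05  # hypothetical cut-off for "significantly fresher"


def risk_flags(ctx: MatchContext) -> list[str]:
    """Return the 'trust them less' conditions that apply to this match."""
    checks = [
        (ctx.favorite_surface_matches < 15, "small sample"),
        (ctx.round_code == "R1", "first round"),
        (ctx.month in (10, 11), "season end"),
        (ctx.surface == "indoor", "indoor surface"),
        (ctx.underdog_energy_edge > FRESHER_THRESHOLD, "fresher underdog"),
    ]
    return [label for applies, label in checks if applies]


def risk_badge(flags: list[str]) -> str:
    """Map a flag count to the dashboard tiers: 3+ high, 1-2 medium, 0 low."""
    if len(flags) >= 3:
        return "High Risk"
    return "Medium Risk" if flags else "Low Risk"


paris_r1 = MatchContext(favorite_surface_matches=7, round_code="R1", month=10,
                        surface="indoor", underdog_energy_edge=0.969 - 0.883)
flags = risk_flags(paris_r1)
print(len(flags), risk_badge(flags), flags)
```

Fed the Etcheverry match, all five conditions fire, which matches the 5/5 retrospective assessment.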

Figure 5: Decision tree for trusting 85%+ confidence predictions. Etcheverry match had 3 red flags (indoor, R1, small sample).


The Etcheverry match: retrospective assessment

If we apply our new criteria:

| Factor | Status | Risk Level |
|---|---|---|
| Indoor surface (9.3% of data) | ❌ | 🔴 High Risk |
| Etcheverry: Only 7 indoor matches | ❌ | 🔴 High Risk |
| First round (R1) | ❌ | 🟡 Medium Risk |
| Late October | ❌ | 🟡 Medium Risk |
| Energy gap favors underdog | ❌ | 🟡 Medium Risk |

Risk Flags: 5/5

Adjusted confidence: 88.4% → 68-72% (still favor Etcheverry, but much less certain)

At 70% confidence, losing to Carabelli is expected 30% of the time: still an edge for Etcheverry, but nowhere near a sure thing.

What we told bettors

Our dashboard showed:

- Ensemble: 88.4% Etcheverry

- High Confidence Badge: ✅

- Value Bet Alert: No (odds agreed with model)

What we SHOULD have shown:

- Prediction: 70% Etcheverry ⚠️

- Medium Confidence Badge: ⚠️

- Data Warning: "Limited indoor data (7 matches)"

- Risk: First round + late season

Other notable failures this month

Failure #2: favorite with fatigue

Player: [Top 20 player]

Prediction: 82% to win

Actual: Lost in 3 sets

Root Cause: Played 3-set match previous day (fatigue underweighted)

Failure #3: underdog with H2H edge

Underdog Probability: 35%

Actual: Won in 2 sets

Root Cause: 4-1 H2H record not weighted enough vs ranking gap

Failure #4: grass specialist on an indoor court

Prediction: 76% to win

Actual: Lost

Root Cause: Grass specialist on indoor court (surface mismatch)

Pattern: Most failures involve data scarcity or context we don't fully capture.

The honest truth about ML prediction

What machine learning CAN do:

✅ Learn from large datasets (1,000+ examples per pattern)

✅ Find complex interactions humans miss

✅ Calibrate probabilities accurately (70% = win 70% of time)

✅ Outperform simple heuristics (better than "always bet favorite")

What machine learning CAN'T do:

❌ Predict low-sample scenarios reliably (<20 examples)

❌ Account for unknown factors (injuries, motivation, personal issues)

❌ Guarantee wins (even at 90% confidence)

❌ Overcome fundamental variance in sports

How to use our predictions wisely

Rule #1: never bet based on confidence alone

❌ BAD: "88% confidence = sure thing, bet big"

✅ GOOD: "88% confidence + low risk flags + value = bet 2-3% bankroll"

Rule #2: check the sample size

When you see a prediction on our dashboard:

1. Check the surface.
2. If it's indoor/grass (less data), reduce mental confidence by 10-15%.
3. If it's R1, reduce by another 5%.

Rule #3: respect the 10-15% fail rate

At 85% confidence, we lose 15% of the time. That's 1 in 7 bets.

If you can't handle losing 1 in 7 "sure things," you shouldn't be betting.

Rule #4: bankroll management saves you

Scenario A (Bad):

- Bet 50% of bankroll on Etcheverry at 88% confidence
- Lose
- Bankroll cut in half
- Devastating

Scenario B (Good):

- Bet 3% of bankroll on Etcheverry at 88% confidence
- Lose
- Bankroll down 3%
- Manageable, move on to next bet

What we're changing

Dashboard improvements (coming soon):

- Sample Size Warnings
  - "⚠️ Limited indoor data (7 matches)"
  - Confidence adjusted down
- Risk Flags
  - 🔴 High Risk (3+ red flags)
  - 🟡 Medium Risk (1-2 red flags)
  - 🟢 Low Risk (no red flags)
- Surface-Specific Form
  - Show form broken down by surface
  - "Clay form: 0.750 | Indoor form: 0.450"
- Energy Alerts
  - "Underdog has significant energy advantage (+0.086)"

The silver lining

We learn from every failure:

Etcheverry loss taught us:

- Indoor predictions need sample size warnings

- Energy should be weighted more for underdogs

- First-round variance is higher than we thought

- 88% ≠ 100% (respect the math)

Each failure feeds the next round of improvements.

That's the point of a learning system: we analyze failures, identify patterns, adjust algorithms, and get better.

A taxonomy of ML model failures in tennis

The Etcheverry case is a useful illustration, but it belongs to a broader taxonomy of failure modes that any data-driven tennis prediction system will encounter. Understanding which category a given failure falls into helps you calibrate how much weight to place on predictions in different contexts.

Category 1 — Data scarcity failures

These occur when the model is asked to predict outcomes for a player, surface, or match context where the training data is thin. Indoor tennis (9.3% of our dataset), players ranked outside the top 100 with limited records, and new ATP 250 events without multi-year baselines are all data-scarcity contexts. The model fills gaps with priors (general patterns) that may not fit the specific case. Our fix is to apply progressive confidence dampening: predictions with fewer than 15 surface-specific matches are adjusted toward the base rate.

Category 2 — Latent state failures

The model sees the past but not the present. Injuries sustained during a match (or undisclosed before), personal circumstances, deliberate sandbagging in low-stakes events, and player motivation levels (a top-10 player coasting through an early-round 250 before a Slam) are invisible to a model built on historical statistics. These failures are partially addressable by incorporating contextual signals (scheduling density, prize money differential, surface-to-surface run) but will never be fully eliminated.

Category 3 — Calibration drift failures

As time passes, a model trained on 2021–2025 data may become less accurate for predicting behaviour in 2026 because player skill trajectories are not static. A player with a 0.675 surface score built from 30 matches in 2021–2022 may be a different performer today — better, or worse. Continuous retraining on recent data (rolling window refit) partially addresses this, but fast-improving players (like Alcaraz in 2022–2023) can outrun the model's ability to catch up.

Category 4 — Ensemble overconfidence

When the ML model and the statistical model both agree strongly on an outcome, the ensemble confidence rockets to 85–90%+. But agreement between two correlated models does not equal 85–90% actual accuracy — it may simply mean both models share the same blind spots. Our confidence calibration table shows the ensemble is slightly overconfident at the top bracket (88.2% actual vs 90–100% predicted). We are exploring diversity-boosted ensembles (mixing in a low-correlation contrarian model) to reduce this effect. For a detailed comparison of what ML models and statistical models each contribute, see our ML vs statistical models deep dive.

Systematic bias analysis: where the model consistently under- and over-predicts

Beyond one-off failures, systematic biases reveal structural gaps in our feature engineering. Tracking prediction vs outcome over large samples surfaces these patterns.

Identified systematic biases (from 9,705-match dataset):

| Bias type | Direction | Magnitude | Root cause |
|---|---|---|---|
| Indoor surface | Over-predict favourite | +4.8pp | Only 900 indoor training matches |
| First rounds (R1) | Over-predict favourite | +5.6pp | Higher tournament-entry variance |
| Ranking gaps > 200 | Over-predict higher ranked | +3.2pp | Thin sample, all-surface ranking |
| October–November | Over-predict fresher player | +2.9pp | Energy model not fatigue-adjusted |
| Clay Grand Slams (R1) | Under-predict underdog | −2.1pp | Clay specialist effect underweighted in R1 |
| Challenger-to-ATP promotions | Under-predict promoted player | −3.7pp | Challenger form score underweighted |

These biases are measured over 500+ predictions per category where possible. They inform the risk flags we surface on the dashboard and guide which confidence adjustments are applied before display.

The practical takeaway for users: when the dashboard flags one of these contexts, the displayed confidence should be mentally adjusted. An 82% prediction in an R1 indoor match effectively functions closer to a 76–77% prediction on the calibration curve. Applying proper bankroll management principles — specifically, reducing stake size in high-risk-flag situations — is the most effective way to account for these systematic biases in real betting decisions.
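The mental adjustment can be written down explicitly. The sketch below subtracts the measured favourite biases from the table above (the context keys are hypothetical labels). Applying either correction alone to an 82% prediction gives 77.2% (indoor) or 76.4% (R1), which is the 76–77% range quoted above. Adding both gives 71.6%, but that assumes the two biases are independent, and the table does not test this. So the sketch defaults to the single largest correction.

```python
# Measured favorite over-prediction in percentage points, from the bias table above.
FAVORITE_BIAS_PP = {"indoor": 4.8, "first_round": 5.6, "ranking_gap_200": 3.2}


def bias_adjusted(confidence_pct: float, contexts: list[str], stack: bool = False) -> float:
    """Subtract the measured favorite bias for the flagged contexts.

    stack=False applies only the largest applicable correction;
    stack=True adds them up, which assumes the biases don't overlap.
    """
    corrections = [FAVORITE_BIAS_PP[c] for c in contexts if c in FAVORITE_BIAS_PP]
    if not corrections:
        return confidence_pct
    return confidence_pct - (sum(corrections) if stack else max(corrections))


r1_indoor = ["indoor", "first_round"]
print(bias_adjusted(82, r1_indoor), bias_adjusted(82, r1_indoor, stack=True))
```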

Correction workflow: from failure to improvement

Model failures are not the end of the process — they are data inputs for the next improvement cycle. Here is how a prediction failure at 8 pm on a Tuesday becomes a feature engineering change three weeks later.

Step 1 — Failure logging. Every prediction outcome is logged with the full feature vector (all input scores, confidence levels, market odds). Failures at 75%+ confidence are flagged for priority review.

Step 2 — Pattern detection. Monthly, failures are aggregated by category (see taxonomy above). A category only triggers a code change if it shows a statistically significant bias over 50+ examples (p < 0.05). One-off failures in novel contexts do not generate code changes — they generate documentation.
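The article doesn't name the significance test. One reasonable choice for "do favorites win less often than the model said?" is an exact one-sided binomial test, sketched below using only the standard library. It simplifies by treating every prediction in the category as if it carried the category's mean confidence. A per-prediction (Poisson-binomial) test would be more precise. Function names are illustrative.

```python
import math


def binomial_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k) for X ~ Binomial(n, p), summed term by term."""
    return math.fsum(math.comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))


def overprediction_pvalue(favorite_wins: int, n: int, mean_confidence: float) -> float:
    """One-sided p-value: chance of this few favorite wins if the model were calibrated."""
    return binomial_cdf(favorite_wins, n, mean_confidence)


def is_systematic_bias(favorite_wins: int, n: int, mean_confidence: float,
                       min_examples: int = 50, alpha: float = 0.05) -> bool:
    """Apply Step 2's rule: at least `min_examples` cases and p < `alpha`."""
    if n < min_examples:
        return False  # too few examples: document it, don't change code
    return overprediction_pvalue(favorite_wins, n, mean_confidence) < alpha


# Made-up category: 60 predictions averaging 85% confidence, favorite won 44.
print(overprediction_pvalue(44, 60, 0.85), is_systematic_bias(44, 60, 0.85))
```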

Step 3 — Feature hypothesis. For each confirmed bias, the team generates a hypothesis: "indoor failure rate is elevated because surface score uses a 3-year lookback window that weights 2022 matches equally with 2025 matches, even though a player's indoor game may have developed significantly." This hypothesis drives a specific feature change: recency-weighted surface scores with a 6-month half-life.
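A 6-month half-life translates into exponential decay weights: a match's weight is 0.5^(age / half-life). A six-month-old match therefore counts half as much as one played today, and a year-old match a quarter. This sketch assumes the surface score can be treated as a weighted win rate, which may not match how the production Surface Score is defined. The function name is hypothetical.

```python
from datetime import date
from typing import Optional

HALF_LIFE_DAYS = 182.5  # about six months, per the hypothesis above


def recency_weighted_win_rate(results: list[tuple[date, bool]], as_of: date,
                              half_life_days: float = HALF_LIFE_DAYS) -> Optional[float]:
    """Win rate on one surface where a match's weight halves every `half_life_days`."""
    total_weight = 0.0
    weighted_wins = 0.0
    for played_on, won in results:
        age_days = (as_of - played_on).days
        if age_days < 0:
            continue  # matches after the prediction date would leak the future
        weight = 0.5 ** (age_days / half_life_days)
        total_weight += weight
        weighted_wins += weight * won
    return weighted_wins / total_weight if total_weight else None


# Made-up indoor record: a win today and a loss exactly one year earlier.
record = [(date(2025, 10, 28), True), (date(2024, 10, 28), False)]
print(recency_weighted_win_rate(record, as_of=date(2025, 10, 28)))
```

Equal weighting would score this record at 50%. With the half-life, the year-old loss carries a quarter of the weight and the score rises to 80%.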

Step 4 — Backtesting. The proposed feature change is backtested on the held-out portion of the dataset (2024–2025 matches not used in training). If the change improves calibration in the target category without degrading overall accuracy, it is approved.
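The approval rule can be expressed as two comparisons on held-out data. The article doesn't name its calibration metric, so the sketch below swaps in the Brier score, a standard choice (lower is better). It also assumes that probabilities are stored for player 1 alongside whether player 1 won. All names and the tiny dataset are illustrative.

```python
def brier(probs: list[float], outcomes: list[bool]) -> float:
    """Mean squared gap between predicted probability and the 0/1 outcome (lower is better)."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)


def accuracy(probs: list[float], outcomes: list[bool]) -> float:
    """Share of matches where the side given >= 50% actually won."""
    return sum((p >= 0.5) == o for p, o in zip(probs, outcomes)) / len(probs)


def approve_change(baseline: list[float], candidate: list[float], outcomes: list[bool],
                   in_target: list[bool], tolerance: float = 0.0) -> bool:
    """Approve if calibration improves in the target category and overall accuracy holds."""
    idx = [i for i, flag in enumerate(in_target) if flag]
    if not idx:
        raise ValueError("no held-out matches in the target category")
    target_outcomes = [outcomes[i] for i in idx]
    better_calibrated = (brier([candidate[i] for i in idx], target_outcomes)
                         < brier([baseline[i] for i in idx], target_outcomes))
    no_regression = accuracy(candidate, outcomes) >= accuracy(baseline, outcomes) - tolerance
    return better_calibrated and no_regression


# Tiny made-up held-out set: probabilities are for player 1; the first two are indoor.
outcomes = [True, False, True, True]
indoor = [True, True, False, False]
baseline = [0.90, 0.90, 0.70, 0.60]
candidate = [0.80, 0.70, 0.70, 0.60]
print(approve_change(baseline, candidate, outcomes, indoor))
```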

Step 5 — Deployment. The updated model is deployed to the predictions dashboard and the improvement is documented in the model changelog. Users who follow our methodology articles see the exact change and can evaluate whether it affects their own betting frameworks.

This correction cycle runs approximately every 4–6 weeks and is the primary mechanism by which the model improves over time. The 83.8% overall accuracy figure you see on the dashboard reflects accumulated improvements from 18 months of failure-driven iteration.

Frequently asked questions

Why do ML tennis prediction models fail even at high confidence?

High confidence (85–90%) means the model estimates roughly a 10–15% probability of the underdog winning. That is not "almost impossible": in 100 predictions at 88% confidence, you should expect to be wrong about 12 times. Failures at high confidence levels are a normal and expected part of a well-calibrated model. A model that never lost at 88% confidence would be underconfident, leaving value on the table. A single loss like Etcheverry's is well within normal variance; what was concerning was the presence of multiple risk flags (indoor, R1, small sample) that should have depressed the displayed confidence.

What is data scarcity and how does it affect prediction accuracy?

Data scarcity occurs when a player has too few matches on a specific surface or in a specific context for the model to learn reliable patterns. Below 15 surface-specific matches, the model fills gaps with general patterns (priors) that may not fit the player's actual strengths or weaknesses on that surface. Etcheverry's 7 indoor matches in our dataset are a clear example. The fix is progressive confidence dampening: mechanically adjusting displayed confidence downward when sample sizes are thin.

How does the ensemble model combine ML and statistical predictions?

Our ensemble blends the output of a gradient-boosted ML model (trained on raw feature vectors) with a Bayesian statistical model (using form, surface, and ranking priors). When both models agree strongly, ensemble confidence is high. When they disagree significantly, confidence is lower and the prediction is flagged as more uncertain. However, ensemble agreement does not guarantee accuracy — both models share some blind spots (notably indoor data scarcity), so agreement in a data-scarce context inflates confidence without a corresponding improvement in actual accuracy.

Should I avoid betting on indoor hard court matches?

Not necessarily — but you should apply a mental discount of approximately 5 percentage points to any indoor prediction at high confidence levels, and be more selective about stake size. Indoor hard court is our weakest surface in terms of training data (9.3% of matches) and has a systematically higher failure rate for favourites at the 85%+ confidence tier. For indoor matches with multiple risk flags (R1, fresh underdog, late season), the displayed confidence is most likely to overstate actual reliability.

How do you identify systematic biases versus random failures?

Systematic bias requires a statistically significant pattern across 50+ examples. A single failure, or even five failures in a similar context, may be random variance. Only when failures cluster in a specific category (indoor matches, R1 predictions, late-season encounters) at rates that exceed what the calibration curve predicts does a pattern become actionable. We test for significance at p < 0.05 before making any code changes in response to failure patterns.

How quickly does the model improve after identifying a failure pattern?

The correction cycle takes approximately 4–6 weeks from pattern identification to deployment. That timeline covers hypothesis formation, feature engineering, backtesting on held-out data, and production deployment with monitoring. One-off failures in novel contexts generate documentation but not code changes; systemic failures trigger the full improvement pipeline. Over 18 months of operation, this cycle has produced 12 distinct feature improvements, each traceable to a specific identified failure pattern.

Does model failure mean I should stop using the predictions?

No. Model failure at the rates we experience (approximately 12–16% at 85%+ confidence) is broadly consistent with a well-calibrated prediction system. Whether following it makes money depends on the price: a bet only pays off over time when the model's true win rate beats the probability implied by the odds. At 1.17 odds (85.5% implied), an 84% hit rate loses money even with perfect discipline, so look for value, not just confidence. The other key is proper bankroll management: never stake more than 2–3% of bankroll on any single prediction, even at the highest confidence levels. At conservative stake sizes, a model with a genuine edge over the odds grows your bankroll steadily, even accounting for inevitable losses. See our predicting upsets article for guidance on when to consider the underdog side of these predictions.

Should you still trust our predictions?

Yes. But intelligently.

Our overall accuracy: 83.8%

That means:

- 83.8% of predictions are correct ✅
- 16.2% are wrong ❌

When we say 88% confidence:

- We win ~84-88% (slightly underperforming, working on it)

- We lose ~12-16%

- This is expected variance, not model failure

The model isn't perfect. It's just better than:

- Betting randomly (50%)

- Always betting the favorite (62%)

- Using rankings alone (68%)

At 83.8%, we beat all those approaches.

Case study: how to bet smartly even when models fail

If you bet on Etcheverry at 1.17 odds (88% confidence):

Bad bankroll management:

- Bet: $500 (50% of $1,000 bankroll)

- Lost: -$500

- Remaining: $500 (50% loss)

- Recovery needed: 100% gain to break even

Good bankroll management:

- Bet: $30 (3% of $1,000 bankroll)

- Lost: -$30

- Remaining: $970 (3% loss)

- Recovery needed: 3.1% gain to break even

Over 100 bets at 88% confidence:

- Win: 84-88 times (expected)

- Lose: 12-16 times

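- With 3% staking (3% of the current bankroll each bet): about +8.6% at 88 wins, but about -5.8% at 84 wins, because at 1.17 odds you need to win more than 85.5% of bets just to break even

- With 50% staking: three losses in a row leave just 12.5% of the bankroll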
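The compounding is easy to check. The minimal sketch below assumes a flat 1.17 decimal price on every bet and fixed-fraction stakes recalculated from the current bankroll; the article doesn't say which staking variant it has in mind. With a fixed fraction, the order of wins and losses doesn't change the final result, so only the counts matter.

```python
def final_bankroll(fraction: float, odds: float, wins: int, losses: int,
                   start: float = 1.0) -> float:
    """Bankroll after fixed-fraction staking at constant decimal odds.

    Each win multiplies the bankroll by 1 + fraction * (odds - 1);
    each loss multiplies it by 1 - fraction.
    """
    return start * (1 + fraction * (odds - 1)) ** wins * (1 - fraction) ** losses


def break_even_win_rate(odds: float) -> float:
    """Win rate at which a bet at these decimal odds has zero expected value."""
    return 1 / odds


for wins in (88, 84):
    growth = final_bankroll(0.03, 1.17, wins, 100 - wins) - 1
    print(f"3% stakes, {wins} wins in 100 at 1.17: {growth:+.1%}")
print(f"break-even win rate at 1.17: {break_even_win_rate(1.17):.1%}")
print(f"50% stakes after three straight losses: {final_bankroll(0.5, 1.17, 0, 3):.1%} left")
```

Small stakes keep a bad run survivable, but they can't turn a price with no edge into profit. That is why the value check matters as much as the staking rule.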

Key takeaways

- 88% confidence ≠ guaranteed win (it means 12% chance of loss)

- Sample size matters (Etcheverry had only 7 indoor matches)

- Indoor surface is our weakest (9.3% of data)

- Energy gaps should be weighted more

- First rounds are more unpredictable (19.8% failure rate)

- We're adding sample size warnings to the dashboard

- Failures are expected and teach us

- Bankroll management protects you from inevitable losses

Transparency matters

We could have hidden this failure. Pretended it didn't happen. Blamed "variance" and moved on.

Instead, we're dissecting it publicly because:

- Honesty builds trust

- Failures teach more than wins

- You deserve to know the limitations

- We improve by analyzing mistakes

No prediction model is perfect.

But a model that learns from its failures gets closer to perfect every day.

Try our improved predictor

We've already integrated these lessons into our algorithm. Every failed prediction makes the next one more accurate.

New features coming:

- Sample size warnings

- Risk flag indicators

- Surface-specific form scores

- Energy difference alerts

Because we don't just predict tennis. We learn from it.

Case study based on a real prediction: Etcheverry vs Carabelli, Paris Masters, October 28, 2025. All scores and data extracted from ensemble_predictions_latest.json. Model improvements in progress.