Jannik Sinner: the rise of a prediction anomaly

Published: October 27, 2025

Reading time: 19 minutes

Category: Player Analysis

From #12 to #1 in four seasons of data

In early 2022, Jannik Sinner was a top-15 regular with clear upside. By late 2025, in the ATP sample we track, he sits at the top of the game with win-rate curves that look more like a multi-year simulation than a normal development path.

This article is not a personality profile. It is a structured look at one player’s row history in our ATP training extract (2022 through 2025): 250 completed Sinner matches, with surfaces, tournament levels, and elite-opponent splits taken from the same JSON audit we publish alongside the piece.

We wrote it for readers who want numbers they can trace, not adjectives stacked on adjectives. Where we speculate, we label it as interpretation; where we count, we show the split.

What we mean by “prediction anomaly” here:

- Results: extremely high win rates in the most recent seasons, especially on hard courts.

- Modelling: any system that uses rolling history will sometimes lag a player who improves faster than a 12-month window suggests (see how our AI turns data into probabilities and when models fail).

- Betting: strong favourites can be mispriced or fairly priced depending on the day’s line; value comes from your fair probability exceeding the implied probability once the bookmaker’s margin is accounted for, not from “Sinner always wins” (value betting basics).

Career snapshot (2022–2025)

Aggregates below match the companion stats audit for this article (extracted from our training table).

| Metric | Value |
|---|---|
| Matches | 250 |
| Wins | 206 |
| Losses | 44 |
| Win rate | 82.4% |

That single number is already elite in a four-year window. It is not a full career stat line like you would use for retired players; it is the slice our models actually see during training.

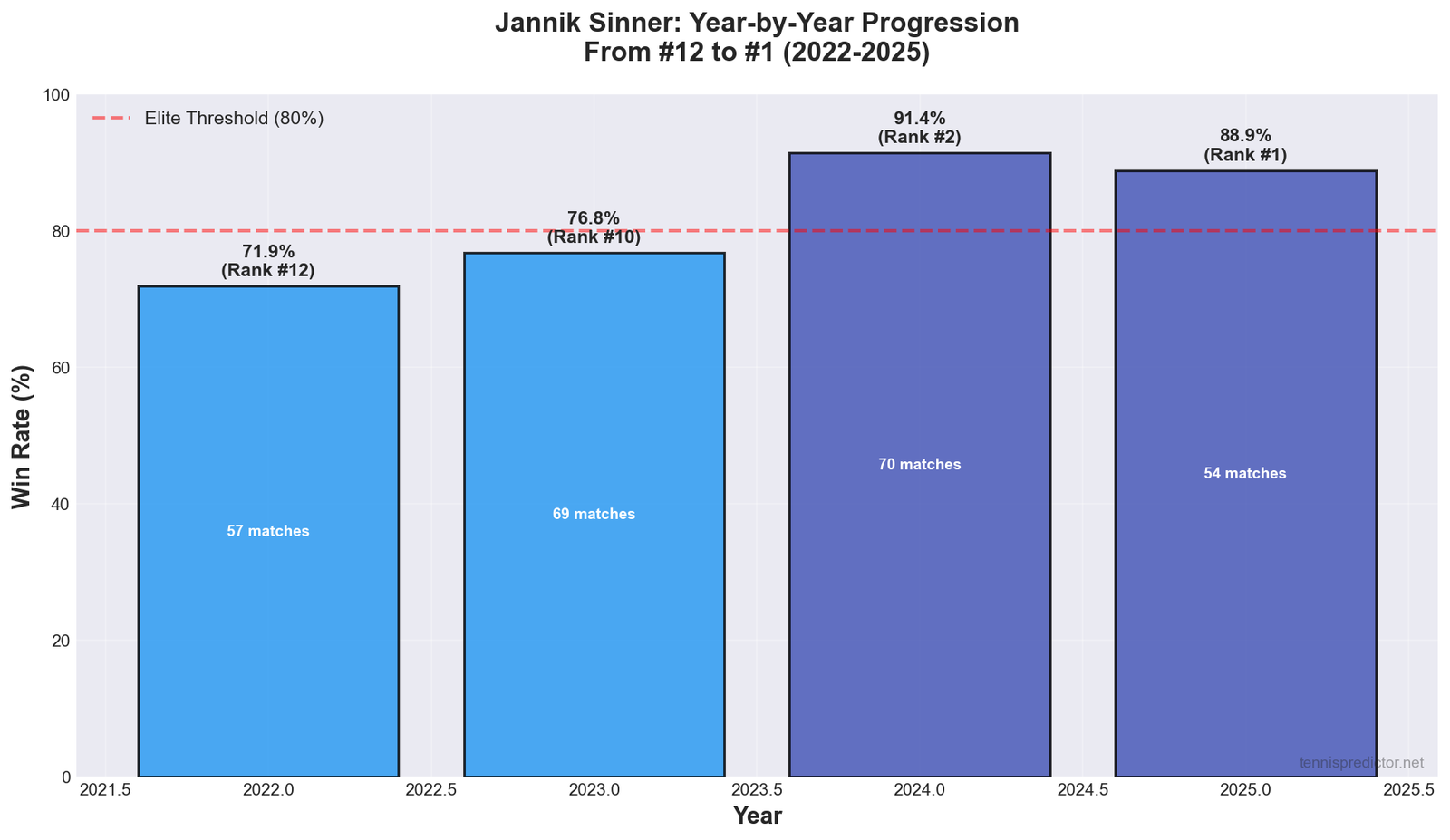

Year-by-year progression

| Year | Record | Win rate | Avg rank (match-weighted) |
|---|---|---|---|
| 2022 | 41–16 | 71.9% | 11.6 |
| 2023 | 53–16 | 76.8% | 10.0 |
| 2024 | 64–6 | 91.4% | 2.0 |
| 2025 | 48–6 | 88.9% | 1.2 |

What stands out:

- The jump into 2024 is not a marginal tweak: win rate moves from the high 70s (76.8%) into the low 90s (91.4%) while match volume stays flat (69 matches in 2023, 70 in 2024).

- 2025 shows a small give-back in win rate versus 2024, which is normal when the baseline was already extreme.
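The win rates above can be re-derived from the records alone. A minimal Python sketch, using only the wins and losses printed in the year-by-year table (the names `SEASONS` and `win_rate` are illustrative and not part of our pipeline):

```python
# Season records copied from the year-by-year table: year -> (wins, losses).
SEASONS = {2022: (41, 16), 2023: (53, 16), 2024: (64, 6), 2025: (48, 6)}

def win_rate(wins: int, losses: int) -> float:
    """Win rate as a percentage, rounded to one decimal place."""
    return round(100 * wins / (wins + losses), 1)

# Per-season win rates, then the four-year totals.
season_rates = {year: win_rate(w, l) for year, (w, l) in SEASONS.items()}
total_wins = sum(w for w, _ in SEASONS.values())
total_losses = sum(l for _, l in SEASONS.values())
overall = win_rate(total_wins, total_losses)

for year, rate in season_rates.items():
    print(year, f"{rate}%")
print("overall", f"{overall}%")
```

Summing the four seasons gives 206 wins and 44 losses, which matches the career snapshot.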

Figure 1: Year-by-year win rate in our 2022–2025 Sinner sample (same source as the tables above).

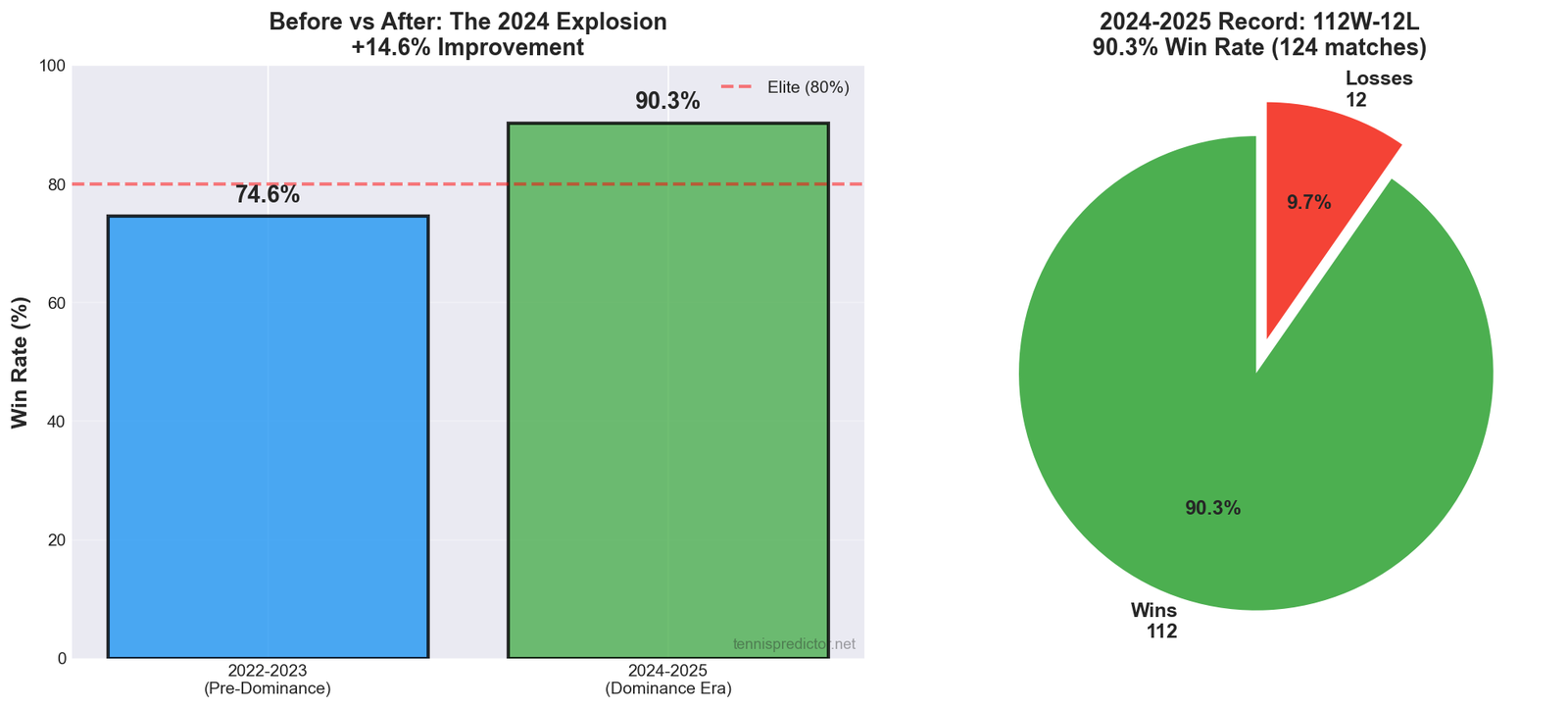

The 2024–2025 block

For the two most recent seasons in the extract:

| Metric | Value |
|---|---|
| Matches | 124 |
| Wins | 112 |
| Losses | 12 |
| Win rate | 90.3% |
| Avg rank | 1.7 |

Ninety percent over more than a hundred matches is unusual even for a world number one. Variance is still real, but the sheer size of the gap is the story.
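To put a rough number on "variance is still real", one option is a Wilson score interval around the 112-from-124 record. This is a standard textbook interval, not something our production models compute, and it assumes each match is an independent trial with a constant win probability, which is a simplification for tennis:

```python
import math

def wilson_interval(wins: int, matches: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a win proportion (z = 1.96 gives roughly 95%)."""
    p = wins / matches
    denom = 1 + z**2 / matches
    centre = (p + z**2 / (2 * matches)) / denom
    half_width = z * math.sqrt(p * (1 - p) / matches + z**2 / (4 * matches**2)) / denom
    return centre - half_width, centre + half_width

# 2024-2025 block from the table above: 112 wins in 124 matches.
low, high = wilson_interval(112, 124)
print(f"{low:.1%} to {high:.1%}")
```

Under those assumptions the interval runs from roughly 84% to 94%: wide enough to respect the uncertainty, yet still entirely above the 2022 and 2023 season win rates.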

Figure 2: Combined 2024–2025 dominance versus earlier years in the same sample.

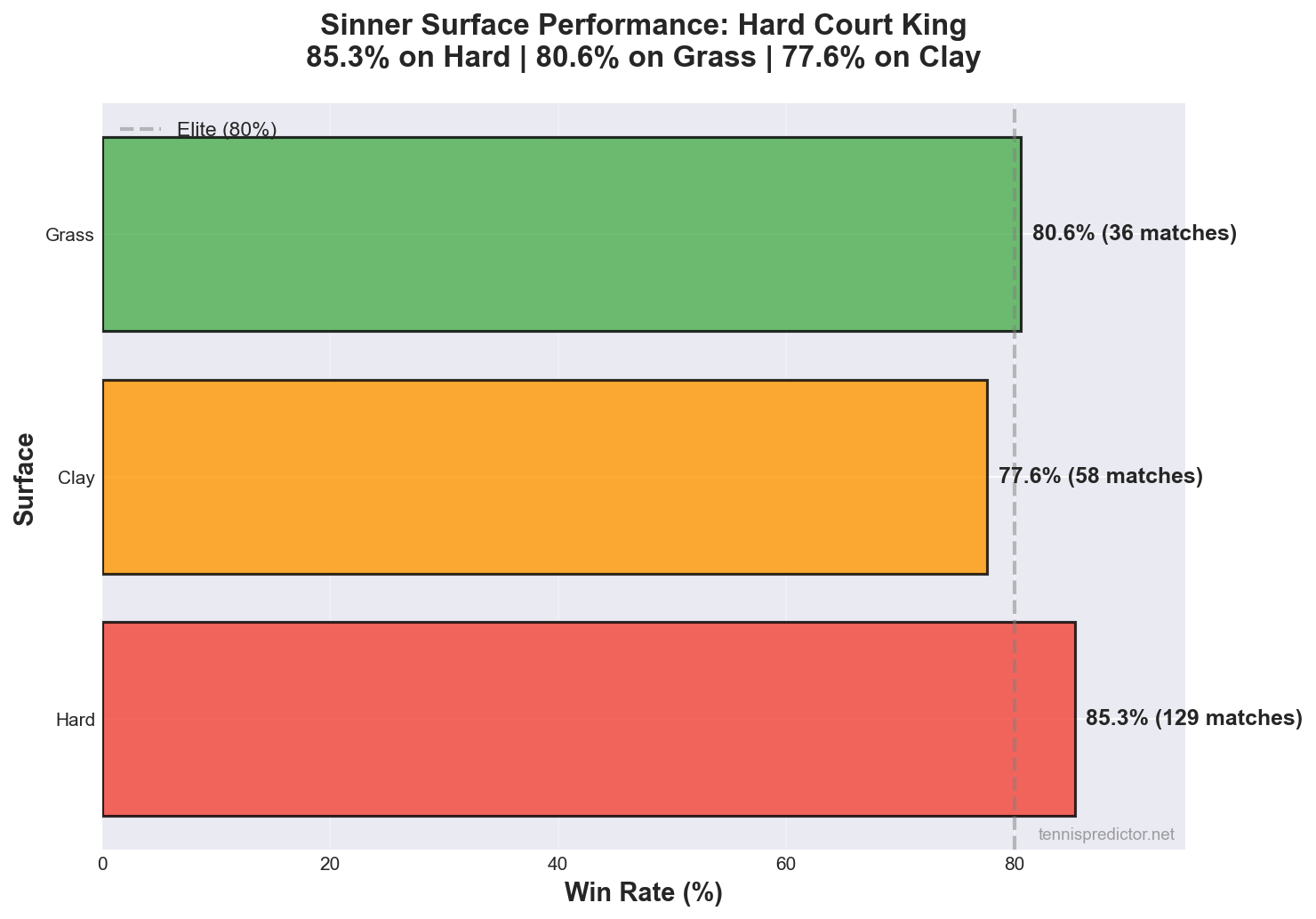

Surface breakdown

| Surface | Record | Win rate | Matches |
|---|---|---|---|
| Hard | 110–19 | 85.3% | 129 |
| Grass | 29–7 | 80.6% | 36 |
| Clay | 45–13 | 77.6% | 58 |

Hard courts host 129 of the 250 rows, just over half the sample, so the hard-court split is both strong and well populated. The three surface rows sum to 223 matches; the remaining 27 rows in the 250-match slice are not broken out in this table. Clay is still clearly positive; calling it a “weakness” only makes sense relative to his own other surfaces, not relative to the tour average.

Within “hard,” schedules still mix indoor and outdoor events, fast and slow conditions, and different ball types. Our aggregate does not split those sub-categories in this article: you are seeing a single hard-court bucket, which is the same granularity the training features typically use at the broadest level unless finer tags exist in the pipeline.

Interpretation for readers (not betting instructions):

- When a player wins roughly four in five clay matches in the same era where he wins five in six on hard, models will often show surface-conditioned strength even before you add opponent quality filters.

Figure 3: Surface win rates in the audited sample.

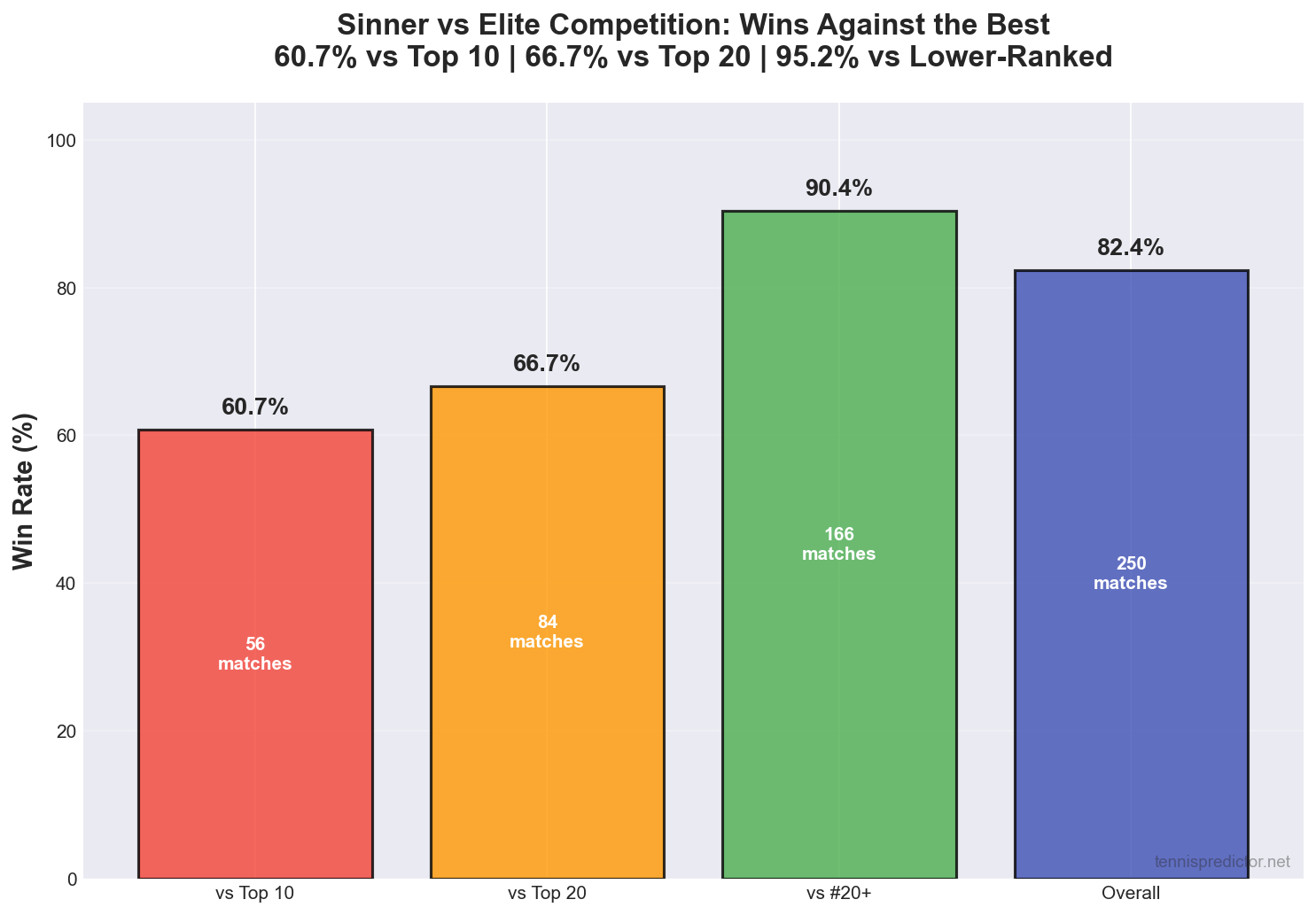

Versus top-10 and top-20 opponents

| Split | Record | Win rate | Matches |
|---|---|---|---|
| vs top 10 | 34–22 | 60.7% | 56 |
| vs top 20 | 56–28 | 66.7% | 84 |

A winning record against top-10 opponents in a 56-match bundle is the difference between “very good” and “era-defining” in many forecasting setups: most players oscillate around parity in that bucket.

Figure 4: Elite splits (top 10 / top 20) from the same extract.

Frequent opponents: head-to-head in the training rows

The training table stores last names (for example Sinner, Alcaraz). The following counts are exact wins and losses among rows where Sinner appears, not official ATP career totals (schedules and coverage can differ slightly from tour box scores).

| Opponent (last name) | Record | Meetings |
|---|---|---|
| Alcaraz | 6–9 | 15 |
| Medvedev | 5–4 | 9 |
| De Minaur | 8–0 | 8 |
| Shelton | 7–1 | 8 |
| Zverev | 5–2 | 7 |
| Rublev | 5–1 | 6 |
| Djokovic | 4–2 | 6 |
| Tsitsipas | 1–4 | 5 |
| Dimitrov | 5–0 | 5 |
| Rune | 2–2 | 4 |
| Fritz | 2–0 | 2 |

The Alcaraz row is the headline: in our rows, Sinner is under .500 (6–9) despite being favoured in many other elite matchups. That is exactly the kind of matchup-specific signal tree-based models can pick up when the sample is not tiny. We do not describe any hand-tuned “penalty” here, because proprietary product rules are outside the scope of this article.

Tsitsipas (1–4 in five meetings) is a different kind of reminder: not every awkward matchup is a “Big Three successor” story. Small n means you should treat that row as directional, not as a law—but it is still enough to break naive “Sinner beats everyone except Alcaraz” thinking.
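One way to see why small head-to-head rows are directional rather than decisive is to ask how often a record at least this poor would appear if the matchup were a true coin flip. The sketch below uses an exact binomial tail; the 50% baseline and the helper name `prob_at_most` are assumptions for illustration, not model outputs:

```python
from math import comb

def prob_at_most(wins: int, meetings: int, p: float = 0.5) -> float:
    """P(X <= wins) for X ~ Binomial(meetings, p)."""
    return sum(
        comb(meetings, k) * p**k * (1 - p) ** (meetings - k)
        for k in range(wins + 1)
    )

# Records from the head-to-head table above.
p_alcaraz = prob_at_most(6, 15)    # Sinner: 6 wins in 15 meetings
p_tsitsipas = prob_at_most(1, 5)   # Sinner: 1 win in 5 meetings

print(f"Alcaraz row:   {p_alcaraz:.3f}")
print(f"Tsitsipas row: {p_tsitsipas:.3f}")
```

Under that coin-flip assumption, a record this poor or worse turns up about 30% of the time for the Alcaraz row and about 19% for the Tsitsipas row, so neither record on its own rules out an even matchup.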

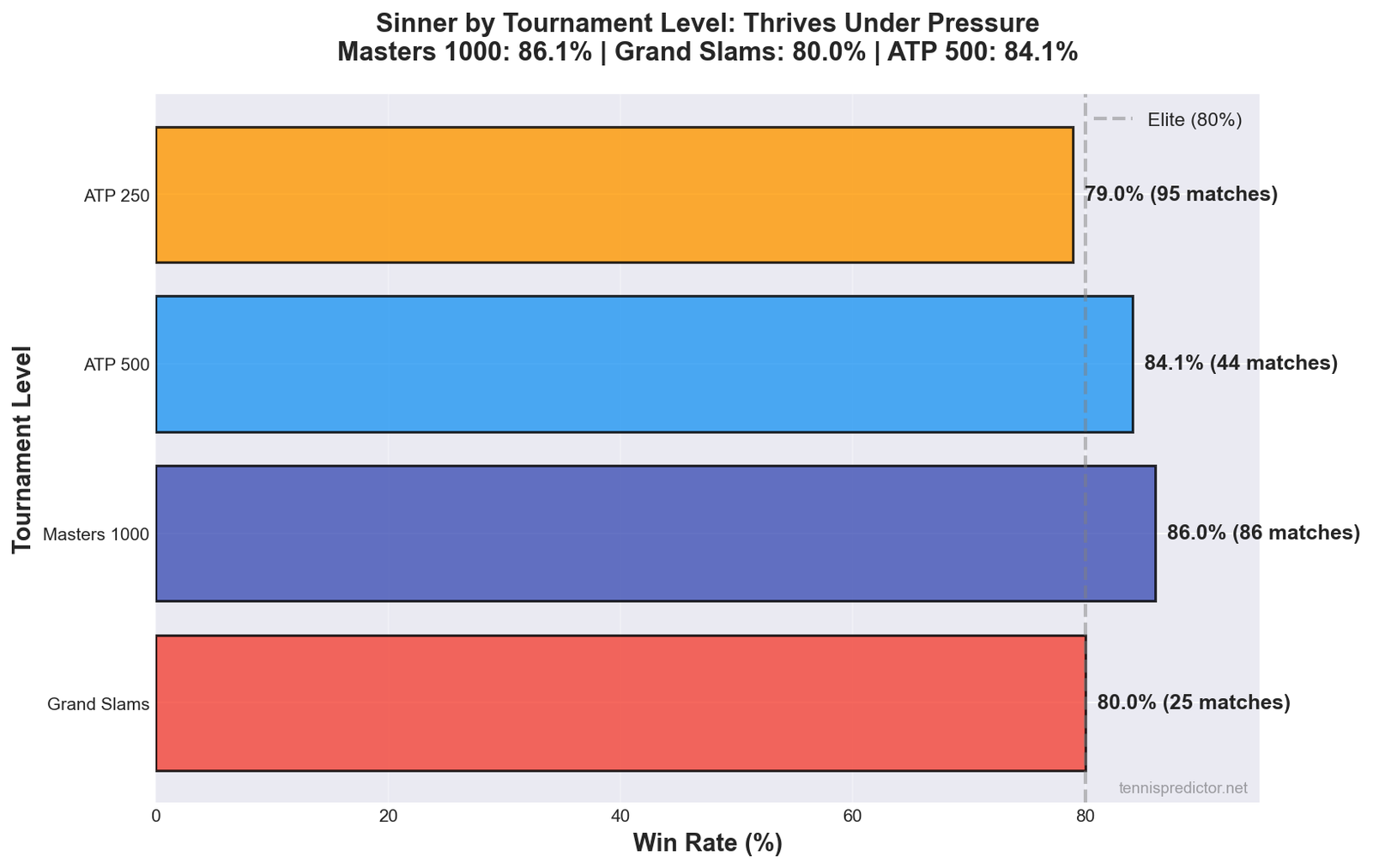

Tournament levels in the extract

Our level codes map to Masters 1000, Grand Slams, ATP 500, and ATP 250 events (see table).

| Level (code) | Tier (description) | Record | Win rate | Matches |
|---|---|---|---|---|
| 4 | Masters 1000 | 74–12 | 86.0% | 86 |
| 1 | Grand Slam | 20–5 | 80.0% | 25 |
| 2 | ATP 500 | 37–7 | 84.1% | 44 |
| 3 | ATP 250 | 75–20 | 78.9% | 95 |

Masters rows are both strong and numerous (86 matches). ATP 250 rows are still positive—78.9%—but they are also the tier where motivation and draw strength vary most, which matters when you think about variance, not just mean performance.

Figure 5: Win rate by tournament tier in the audited sample.

Sample scope: what 250 matches do and do not prove

Two hundred fifty rows is large for a single-player slice in a multi-year ATP extract, but it is still not a full career. It is exactly the kind of sample forecasting systems consume: enough to estimate surface-specific and opponent-tier behaviour, yet too small to treat every percentage as a physical law.

What improves with more rows:

- Surface splits become less noisy once you move past thirty or forty matches per category; Sinner clears that comfortably on hard courts (129) and clay (58), and sits right at that threshold on grass (36).

- Elite splits (top 10 / top 20) stay volatile by nature: the opponent pool is small and the matches are often three-set fights.

What does not disappear with more rows:

- Scheduling still matters. A season heavy on indoor hard Masters can inflate hard-court win rates relative to a season with more outdoor 250s.

- Injury and illness are under-reported in public tables; our extract does not magically add private medical data.

- Rule changes and ball changes (where relevant) are part of the environment; they are not analysed in this article because the extract has no column that captures them cleanly.

The dataset-wide total for the extract referenced in the JSON audit is 9,705 completed matches. Sinner’s 250 rows are therefore about 2.6% of the rows in that universe—enough to matter for player-specific learning, not enough to dominate the global forest by themselves.

When the extract updates (new season, new cleaning rules, or additional tours), every aggregate in this article should move slightly. That is normal. The purpose of publishing dated extracts is not to freeze truth forever; it is to make the claims checkable at the time of writing.

Interpreting the 2024 surge vs 2025 sustainment

The cleanest story in the table is:

- 2024 is the peak win-rate year (91.4%).

- 2025 is slightly lower (88.9%) but still far above the 2022–2023 band.

Why this matters for forecasting:

- If you treat “long-run true strength” as a constant, you will be surprised whenever a player’s rolling form shifts. That is a modelling problem, not a moral one.

- If you treat each season as independent, you will ignore carry-over in skill and confidence. That is also wrong.

A balanced view is state-space intuition: the player today is related to the player 18 months ago, but not identical. Sinner’s 2024–2025 block shows that recent state is unusually strong relative to the earlier window—exactly the situation where chronological evaluation and live monitoring disagree with naive “career average” thinking.
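A very rough stand-in for that intuition is to down-weight older seasons when averaging. The sketch below is plain exponential weighting, not a real state-space model, and the `decay` value of 0.5 per season is an arbitrary choice for illustration:

```python
# Season records from the year-by-year table: year -> (wins, losses).
SEASON_RECORDS = {2022: (41, 16), 2023: (53, 16), 2024: (64, 6), 2025: (48, 6)}

def recency_weighted_rate(records, decay=0.5):
    """Win rate where each season back from the latest counts `decay` times as much."""
    latest = max(records)
    weighted_wins = weighted_matches = 0.0
    for year, (wins, losses) in records.items():
        weight = decay ** (latest - year)
        weighted_wins += weight * wins
        weighted_matches += weight * (wins + losses)
    return weighted_wins / weighted_matches

flat_rate = recency_weighted_rate(SEASON_RECORDS, decay=1.0)    # plain four-year average
recent_rate = recency_weighted_rate(SEASON_RECORDS, decay=0.5)  # recent seasons count more

print(f"flat: {flat_rate:.1%}, recency-weighted: {recent_rate:.1%}")
```

With `decay=0.5` the estimate lands near 86.8%, between the four-year 82.4% and the 90.3% of the 2024–2025 block. The exact figure moves with the decay you pick, which is the point: the answer depends on how quickly you let old seasons fade.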

Narrative vs row-level reality

Broadcasts sell storylines. Row-level datasets sell conditional frequencies.

Example pattern (illustrative, not a single-match claim):

- When a player wins roughly nine in ten matches over two seasons, media coverage will still highlight each loss as a “shock” because rarity is newsworthy.

- A model that outputs 60–70% for a tight elite matchup will look “wrong” if the audience mentally rounds Sinner up to 95% every week.

That is not a plea to ignore losses; it is a plea to separate aggregate dominance from per-match uncertainty. Tennis is a low-scoring sport: a handful of key points can flip a match even when the stronger player is genuinely stronger.

How this connects to our production models (without hand-waving numbers)

We do not publish a separate “Sinner-only accuracy certificate” in this article. What we do publish elsewhere is:

- A Random Forest classifier trained on thousands of labelled ATP rows, with hundreds of features per match.

- Chronological holdout evaluation, because random shuffles lie about tennis.

- Multiple definitions of “accuracy” (cross-validation, holdout, live settled picks) that must not be mixed casually.
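For readers unfamiliar with chronological holdout, the idea can be sketched in a few lines of standard-library Python. The field names (`date`, `label`) and the rows themselves are placeholders invented for illustration; the real training table and split dates are documented in the methodology article, not here:

```python
from datetime import date

def chronological_split(rows, cutoff):
    """Train on matches played before `cutoff`; hold out everything on or after it."""
    ordered = sorted(rows, key=lambda row: row["date"])
    train = [row for row in ordered if row["date"] < cutoff]
    holdout = [row for row in ordered if row["date"] >= cutoff]
    return train, holdout

# Placeholder rows: dates and labels are made up purely to exercise the function.
rows = [
    {"date": date(2024, 6, 1), "label": 1},
    {"date": date(2022, 3, 1), "label": 0},
    {"date": date(2025, 2, 1), "label": 1},
    {"date": date(2023, 9, 1), "label": 1},
]
train, holdout = chronological_split(rows, cutoff=date(2024, 1, 1))

# Every training match predates every held-out match, so nothing leaks from the future.
assert max(r["date"] for r in train) < min(r["date"] for r in holdout)
```

A random shuffle would break that final check, letting the model train on matches played after the ones it is later scored on.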

If you want a single takeaway: player-specific history is part of the feature vector, but market-implied probabilities and ranking gaps still dominate many splits—see the feature-importance discussion in the how AI predicts tennis explainer.

Opponent quality: why ranking splits are not the whole story

Top-10 and top-20 splits are transparent and easy to audit. They are also blunt instruments:

- A player ranked #9 on paper might be returning from injury—rankings lag.

- A player ranked #25 might be under-ranked after a long absence.

- Surface moves the fair price more than a single integer rank in many matchups.

That is why modern pipelines include surface-specific history, recent form, and market-implied information—not because rank is useless, but because rank alone is too slow for week-to-week betting.

For Sinner, the elite splits are strong enough that you still care about who shows up across the net, even when the headline numbers look like a video game.

Era strength and “dominance” language

We use words like dominant because the win rates in this window are genuinely high. That does not mean we have ranked every historical season on a unified Elo scale in this article.

What we are careful about:

- We do not claim a GOAT ordering from 250 rows.

- We do not claim a definitive “peak age” forecast for a living athlete.

- We do claim that, within 2022–2025 in our extract, Sinner’s results sit above typical top-five baselines in the same table.

If you want a broader population view—how often upsets happen, how surfaces shift distributions—read the large-sample match article and compare those numbers to this single-player lens.

Mental model: what “anomaly” means in statistics

In ordinary English, anomaly sounds like “error” or “bug.” In forecasting, it often means residual structure—something your default model class does not absorb without extra work.

Examples of benign anomalies:

- A player improves faster than a rolling window expects.

- A favourite wins more often than a naive calibration line suggests—until the market or the model catches up.

Examples of dangerous anomalies:

- A small sample where variance masquerades as skill.

- A headline stat that ignores surface or draw composition.

Sinner’s audited profile is neither a tiny sample nor a single season. That is why the word anomaly is justified here, conditional on the extract, while we still refuse to invent precision we did not measure.

Betting angle: edges, margins, and honesty

Implied probability from decimal odds is only a starting point.

What serious bettors still have to do:

- Compare a fair probability (from a model or a well-built heuristic) to the implied price.

- Subtract margin (bookmaker overround) mentally or explicitly.

- Apply stake sizing that survives losing streaks (bankroll basics in the dedicated guides).
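The first two steps in the list above reduce to a few lines of arithmetic. A hedged Python sketch for a two-outcome market follows; the function names are illustrative, and proportional normalisation is only one common way to strip the overround, not necessarily what any bookmaker or our product uses. No odds or probabilities are filled in, in keeping with this article's policy on invented lines:

```python
def implied_probability(decimal_odds: float) -> float:
    """Raw implied probability of a decimal price (still includes the margin)."""
    return 1.0 / decimal_odds

def overround(odds_a: float, odds_b: float) -> float:
    """Bookmaker margin: how far the two raw implied probabilities exceed 1."""
    return implied_probability(odds_a) + implied_probability(odds_b) - 1.0

def no_margin_probabilities(odds_a: float, odds_b: float) -> tuple[float, float]:
    """Rescale both raw implied probabilities so they sum to 1 (proportional method)."""
    raw_a = implied_probability(odds_a)
    raw_b = implied_probability(odds_b)
    total = raw_a + raw_b
    return raw_a / total, raw_b / total

def edge(fair_probability: float, decimal_odds: float) -> float:
    """Expected profit per unit staked if `fair_probability` is correct."""
    return fair_probability * decimal_odds - 1.0
```

A positive `edge` only means the price beats your own probability estimate; whether that estimate deserves trust is a separate question.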

Why Sinner creates a recurring discussion:

- Extreme favourites often have short prices. Short prices can still be value if the true probability is even higher—but they can also be traps where the market is already efficient.

- Head-to-head exceptions (like the Alcaraz row history) are the counterexample that keeps “just bet the favourite” from being a strategy.

Things the data in this article actually support:

- Sinner’s recent win-rate levels are extreme; markets may or may not fully price regime change at any given posting.

- Surface and tier splits give context for how much of the edge is “Sinner baseline” versus schedule composition.

- The Alcaraz matchup is a live reminder that aggregate strength does not erase specific H2H history.

Things we avoid:

- Invented ROI, stake sizes, or “value bet counts.”

- Storybook examples of fake odds lines or fake model outputs.

- Claims that any model “automatically” prints a calibrated probability for a named future match without checking the live feature snapshot.

We do not list fabricated stake percentages or “bankroll units” here. If you want a framework for comparing model probability to implied odds, read the value betting primer; if you want humility about failure modes, read when models fail.

Why we call this a “prediction anomaly”

1. Non-stationary skill

If you trained only on 2022–2023 rows, you would infer one trajectory; 2024 adds a different regime. Sequential validation exists precisely because this happens (accuracy definitions).

2. Compressed loss count in the recent window

Twelve losses across 124 matches in 2024–2025 is a narrow failure set. Models and humans both risk overfitting narratives to those twelve—every loss looks meaningful even when some are simply tight coin flips.

3. Matchup concentration

When one opponent (Alcaraz) sits on the wrong side of 50–50 in a double-digit sample, “overall favourite strength” and head-to-head reality diverge. That is uncomfortable for simple heuristics; it is exactly where structured models and disciplined staking matter.

We are not claiming a private cross-validated accuracy number for “Sinner-only” predictions in this article. Public reporting of accuracy is easy to get wrong; start from the methodology piece above if you want percentages with definitions attached.

Frequently asked questions

How were these numbers produced?

We filter the ATP training extract to rows involving Sinner, aggregate wins and losses, and save the result in a small JSON audit file shipped next to the article. Counts are reproducible from the same training pipeline the site uses.
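In outline, that filter-and-aggregate step looks something like the sketch below. The `winner` and `loser` field names, the placeholder rows, and the JSON keys are assumptions made for illustration; the real extract's schema and audit format may differ:

```python
import json

def audit_player(rows, player):
    """Count wins and losses for `player` across completed-match rows."""
    wins = sum(1 for row in rows if row["winner"] == player)
    losses = sum(1 for row in rows if row["loser"] == player)
    matches = wins + losses
    return {
        "player": player,
        "matches": matches,
        "wins": wins,
        "losses": losses,
        "win_rate_pct": round(100 * wins / matches, 1) if matches else None,
    }

# Placeholder rows with made-up names, only to show the shape of the input.
rows = [
    {"winner": "PlayerA", "loser": "PlayerB"},
    {"winner": "PlayerC", "loser": "PlayerA"},
    {"winner": "PlayerA", "loser": "PlayerC"},
    {"winner": "PlayerB", "loser": "PlayerC"},
]
audit_json = json.dumps(audit_player(rows, "PlayerA"), indent=2)
print(audit_json)
```

Rows that do not involve the player are simply ignored, which is why the audit is a slice of the training table rather than a separate data source.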

Are these official ATP career statistics?

No. They are dataset-conditioned totals for 2022–2025 in our table. They should be close to tour reality but are not a substitute for the ATP site if you need a canonical career total.

Why do head-to-head counts differ from what I remember on TV?

Television graphics sometimes include exhibitions or use different name disambiguation. Here we only count rows present in our training extract, with the naming convention the CSV uses (last names).

What does “prediction anomaly” mean if we do not quote a custom accuracy?

It means pattern breakers: very high win rates, rapid regime change, and matchup structure that pure ranking heuristics miss. The anomaly is about structure in the data, not a magic percentage pulled from thin air.

Is Sinner “easy to predict” for your models?

Sometimes favourites are easier to score in hindsight because results align with seeding; that does not guarantee future calibration or betting value. See the main methodology article for why.

Is the Alcaraz matchup a fade for Sinner backers?

The sample shows a losing record in our rows. Whether a specific future line offers value still depends on price, surface, and form—always compare probability to implied odds.

Why is clay labelled “weaker” for Sinner?

Only relative to his own hard-court numbers. In absolute terms, 77.6% on clay across 58 matches is still elite output in this window.

Do tournament level splits prove he “tries harder” at Masters?

They prove results differ by tier in this sample. Motivation is one possible story; draw strength and field depth are others. We keep the claim empirical.

Does a high win rate mean Sinner is always “correctly priced”?

No. A high win rate describes outcomes; betting value is about price. You can agree Sinner is the right favourite and still reject a ticket because the implied probability is too high relative to your fair probability.

What should I do if my model disagrees with the market on Sinner?

First, do not assume your model is right because it feels clever. The market aggregates information you cannot see. Second, check inputs: injury news, surface, rest, and odds features matter. Third, read when models fail so you understand systematic failure modes.

Why focus on 2024–2025 as a separate block?

Because two-season aggregates stabilise recent form without collapsing everything into one long career average. For a player whose level changed quickly, recent windows track the current regime more honestly than 2019 data would.

Are the charts required to agree with the tables?

They should. If you ever spot a mismatch, treat the JSON audit and the tables as the source of truth until the chart script is regenerated.
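Readers who want to run that kind of check themselves can compare each breakdown against the headline record. The sketch below copies the wins and losses from the tables in this article; the helper names are illustrative:

```python
# (wins, losses) per row, copied from the tables in this article.
HEADLINE = (206, 44)
BREAKDOWNS = {
    "year": [(41, 16), (53, 16), (64, 6), (48, 6)],
    "tier": [(74, 12), (20, 5), (37, 7), (75, 20)],
    "surface": [(110, 19), (29, 7), (45, 13)],
}

def total(records):
    """Sum a list of (wins, losses) pairs into one (wins, losses) pair."""
    return sum(w for w, _ in records), sum(l for _, l in records)

def uncovered_matches(records, headline=HEADLINE):
    """Headline matches not accounted for by a breakdown (0 means full coverage)."""
    wins, losses = total(records)
    return sum(headline) - (wins + losses)

coverage = {name: uncovered_matches(recs) for name, recs in BREAKDOWNS.items()}
print(coverage)
```

A result of 0 means the breakdown accounts for every headline match; a positive number flags rows the breakdown leaves out, which is worth knowing before trusting a chart built from it.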

How often should this article be refreshed?

Whenever the training extract is regenerated for a new season, re-run the stats script and update figures if the design team regenerates charts.

Where can I read about model limits and honesty?

Start with how our AI predicts tennis matches and when models fail.

Related articles

If you are new to the site, read the methodology pieces before you treat any player article as a betting system. Player profiles summarise history; they do not replace live model inputs or risk controls.

- How our AI predicts tennis matches with 70%+ accuracy

- When our ML model gets it wrong: lessons from real mistakes

- Value betting in tennis: a beginner’s guide

- Machine learning vs statistical models: which predicts tennis better?

- We analyzed 10,000 tennis matches: here’s what we learned

Data transparency

Player: Jannik Sinner

Window: 2022-01-03 through 2025-12-31 (per extract metadata)

Match totals: 250 rows in the audited slice

Dataset context: the training snapshot referenced in the JSON audit contains 9,705 completed matches in total; Sinner rows are a single-player subset inside that universe.

Head-to-head: opponent counts recomputed from the same training extract, using the last name Sinner as the player identifier, aligned with the table in this article. Rival rows use the same last-name convention (for example Alcaraz, Medvedev).

Figures: the five charts embedded above are generated from the audited stats file and use the standard site watermark.

Not included here: private cross-validation slices, per-match probability exports, or bookmaker line history. Those belong in methodology and product documentation, not in a player spotlight.

For the full honesty framework on accuracy metrics, always defer to the methodology article rather than isolated player case studies.

If you reuse any figure from this page, cite TennisPredictor and the publication window so readers know which training era the percentages refer to—snapshots drift as schedules grow.